Defining customer support in 2024: Why it’s key to your long-term success

Personal and contextual support interactions have become synonymous with a great customer experience. As AI transforms customer support, how will support teams unlock new opportunities to ensure that personal connection?

In the not-too-distant past, customer support was often seen as a necessary cost that was really just a tax on success. But with customer expectations on the rise, progressive companies are beginning to recognize world-class customer support as the powerful differentiator it is, and building customer-centric cultures that reflect its importance.

As leaders’ attitudes to customer service have changed, so has customer service software. Putting your customers front and center boosts customer retention, customer advocacy, and competitive advantage. Modern customer service tools must take the same customer-centric approach as the teams that use them to truly optimize the customer experience and realize these benefits.

What is customer support?

Customer support is the support you provide to your customers (and potential customers) throughout the customer journey. Its goal is to address customer needs by resolving issues, troubleshooting problems, and helping them to get the most from your product or service.

At a high level, the role of customer support is to ensure customers end every interaction with your brand happier than when they started. The range of services you offer should help your customers not just resolve their problems, but get the most out of your product.

Some companies use terms such as customer service or even customer success interchangeably with customer support. While there are subtle differences between those fields, the larger principle remains the same – making sure your customers get the best value possible from your product.

“Every customer should feel like they’re involved in a one-to-one conversation with a business”

As AI becomes a fundamental part of every customer-facing team, the boundaries between these fields will blur further as teammates across customer service, customer success, and even sales, work together to map out the entire customer journey and create a seamless end-to-end experience for every customer.

Embracing modern customer support tools

Empowering your customer support team with customer support software and philosophies that allow them to connect and converse with your customers is key. Our Customer Service Trends Report 2024 revealed that just 18% of support teams believe the tools they use can fully support their needs all of the time.

As AI’s role in customer support continues to expand, it’s crucial that your team is equipped with the tools it needs to embrace the opportunities AI offers – and takes the time to think through the areas in which AI can enhance the customer journey and experience.

By recognizing the different skills and efficiencies both humans and AI bring to the table, you can build a human+AI partnership that will dramatically improve your customers’ experience with your brand, and set your business apart from your competitors.

After all, customer support should be personal. Every customer should feel like their needs are seen and prioritized by a business, and that support queries are treated as part of a broader relationship. As a business, this requires responding knowledgeably, personally, and proactively within a reasonable period of time.

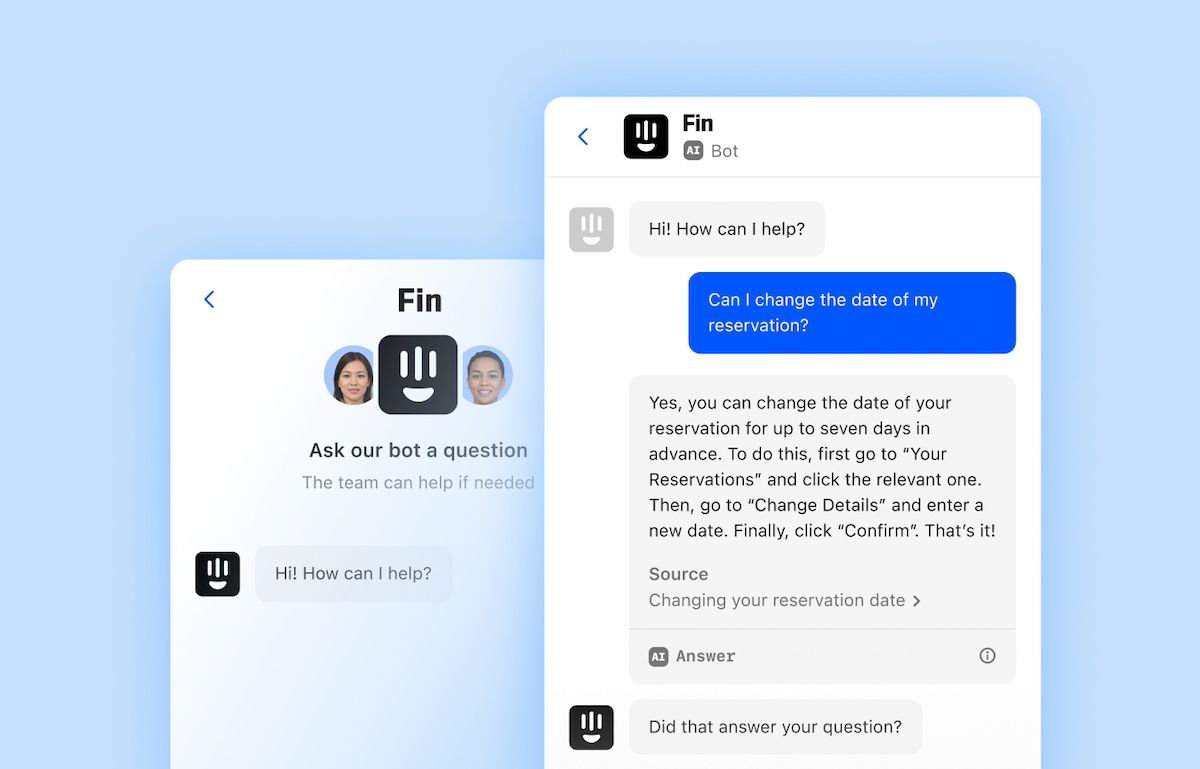

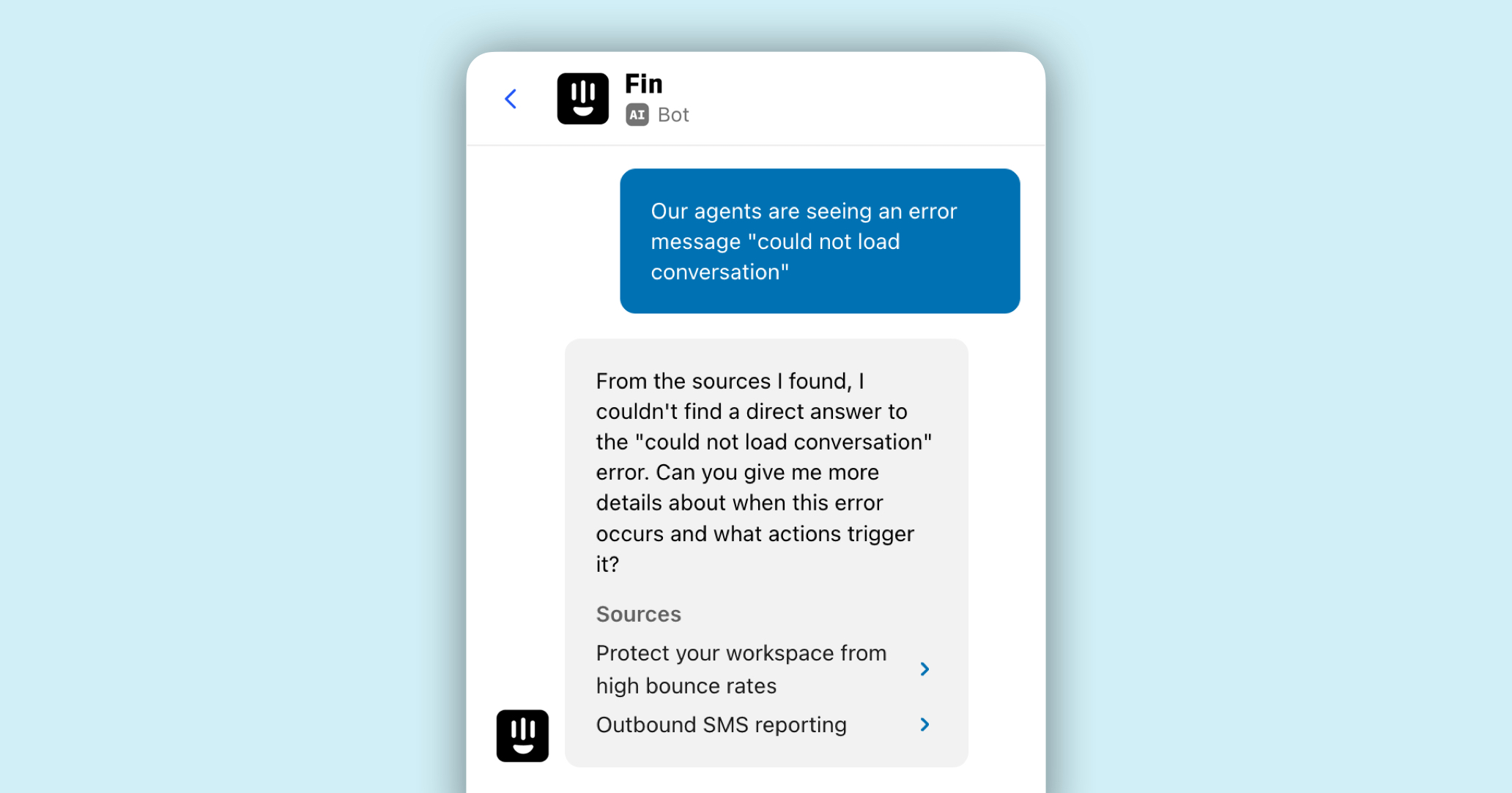

Now, with rapid advances in automation and AI, you can leverage tools like our AI chatbot, Fin, to provide trustworthy answers to your most commonly asked questions, dramatically improving the speed and efficiency of your customer support, and unlocking more time for your team to empathetically address more complex queries.

Your customers know best

Delivering consistent value means paying close attention to what customers want and need, and being willing to adapt and change direction where necessary. At Intercom, we’ve learned a lot from our customers’ opinions, one example being our evolving philosophy around ticketing systems.

We have always believed in the power of personal, conversational support, delivered via a world-class business messenger. We believed that ticketing systems existed to handle complex queries, and because solutions like Fin, our AI chatbot, our Messenger, and our Inbox provide that support fluidly, personally, and efficiently, we saw them as an unnecessary add-on.

But, as time went on, we came to understand that there was something missing. While teammates can handle complex queries using Fin, Messenger, and Inbox, we still needed a way to represent customer requests as they progressed along a journey, and provide regular updates to both the customer and the agents involved. That’s what tickets do – they provide a way for businesses and their customers to track the “behind the scenes” work that needs to be done to action a customer’s request, and offer updates to the customer.

Following discussions with customers, our teams, and customer service experts, we realized how important a ticketing system is. It helps us gather the info we need to handle a customer’s request asynchronously, keep track of all those requests internally, and give our customers a way to follow up while they are waiting. But we also realized we could build tickets the Intercom way – better addressing the needs of a modern support team while integrating seamlessly into an all-in-one support platform.

The importance of customer support to your business

Fundamentally, we believe that to grow a great product you need:

- Happy customers

- Highly engaged customers

- Customers who stick around

- Customers who continuously provide feedback to improve the product

Each of those factors – customer happiness, engagement, loyalty, and feedback – can be influenced by support more than any other function of your business. In our recent report, 68% of C-level support execs said it’s harder to retain customers now than it was a year ago. In an era when unhappy customers have plenty of alternatives and can swiftly dent your reputation, it’s critical that you get customer service right.

Doing it right depends on a number of factors, but at its core it’s quite simple – set great expectations and aim to be prompt, answer questions with the right product knowledge, and do it all with a tone that backs up your brand. But to achieve these benefits, you must carefully define your approach to customer support.

Defining your customer support level

When a customer reaches out to your support team, everything that happens is an aggregation of small choices you’ve made. You’ve likely made active, conscious decisions about what kind of support you are going to offer based on values that you’ve arrived at earlier on in your customer service team’s evolution – but these decisions can carry unintended trade-offs that have a real impact on your customers. Here are some examples:

- During your regular hours of operation, a customer waits six hours for a response to a question.

Decision you made: You decided speed of response was not a priority.

- Based in Indonesia, a customer submits a question and hears nothing for 16 hours.

Decision you made: You chose not to provide support in that time zone.

- Incorrect information is provided, which fails to fix the problem.

Decision you made: You chose not to invest adequately in your help content, so out-of-date advice is still being provided to customers.

- A customer spending $2,000 a month tells a support rep they are about to quit, and no one reaches out to them.

Decision you made: You decided not to have an escalation policy in place for when VIP customers are about to quit.

With AI in the picture, many of these trade-offs are no longer necessary. An AI chatbot allows you to answer your customers’ most commonly asked questions, and triage or dive deeper into more complex ones, at any time and at near-instant speed. But adopting and embracing the power of AI doesn’t just require a shift in the way you work – it requires a mindset shift to move your team towards a way of working where AI and humans complement each other’s strengths, and take your overall customer experience to new heights.

Key features of your support

Here are some of the key features of your support that you get to design and that dictate the look and feel of your support offering to your customers. The advent of generative AI has changed the way we think about these features, but they’re as important as ever to ensure you’re delivering a fluid, consistent, and effective support experience that fits with your overall brand.

1. Style

What style of support are you going to provide? Some businesses go for the “one big answer” approach, trying to answer each customer contact with a comprehensive reply that covers every possible related scenario.

That answer may be five or six paragraphs long, include links to your documentation, and even have an embedded video about how to use the feature in question. It’s comprehensive but can also come across as impersonal and can leave the customer feeling unheard. With 87% of customer service teams saying that customer expectations are on the rise, this style of support might not cut it in today’s world.

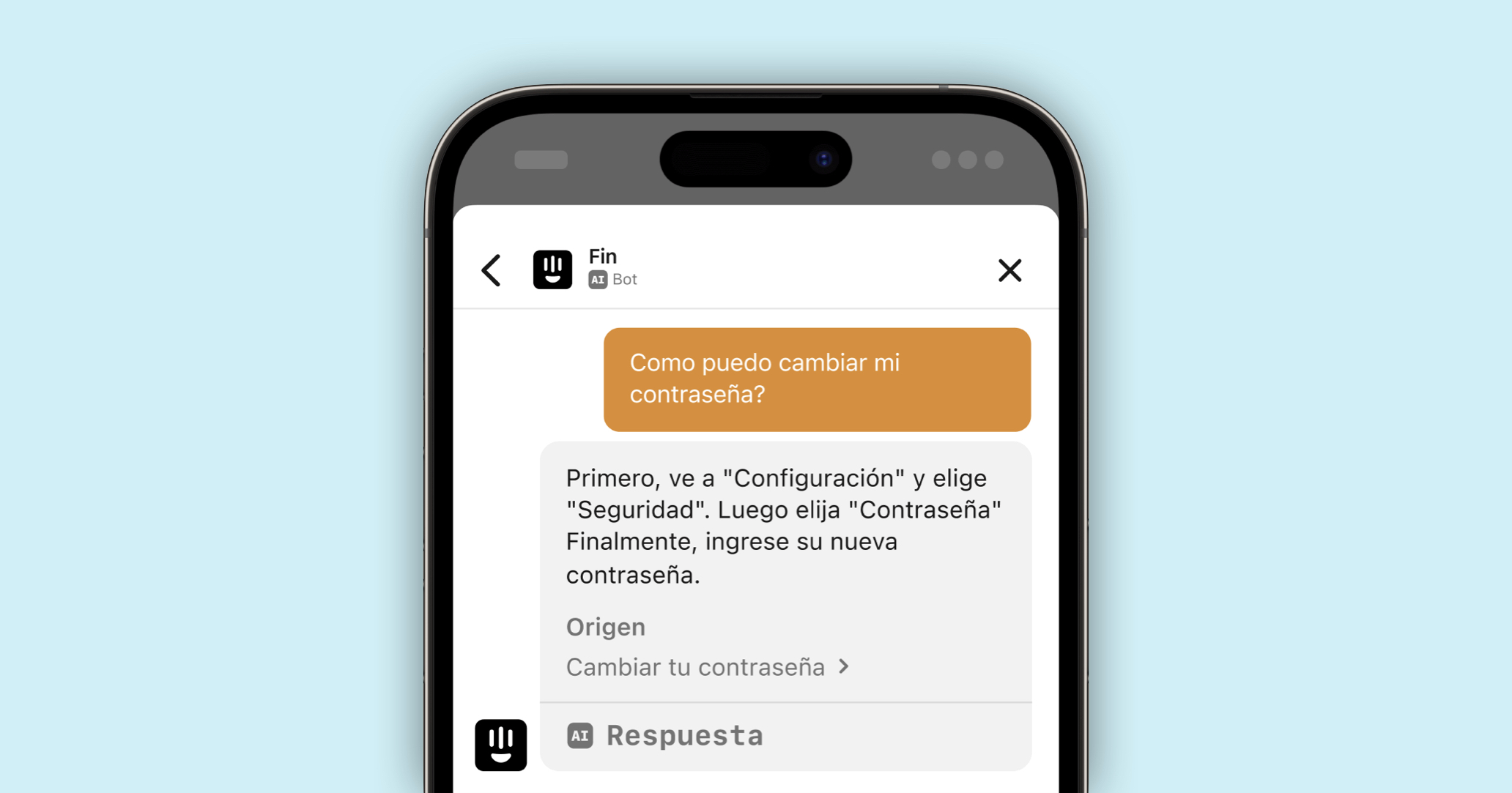

AI chatbots are making it possible to deliver personal, conversational customer service at internet scale. Some AI bots can quickly get to the crux of a customer question and deliver a tailored response, as well as offering source material, asking clarifying questions, and directing a request towards the appropriate team. That leaves your support team with more time to have real conversations with your customers – whether that’s via email, through in-app messaging, or over the phone.

2. Voice and tone

Closely related to the style of support you plan to offer is the manner in which you plan to speak to customers. You need to think about your company voice (formal and reserved or relaxed and chatty?) as well as the tone for different scenarios, e.g. responding to a customer who’s been overcharged compared to chatting to a customer on Twitter. Some of the questions you might want to ask yourself include:

- How formal do you want your customer communications to be?

- Are you going to adopt a conversational tone and encourage engagement?

- Should you utilize modern communication trends such as emojis and gifs?

While it’s important to have these guidelines in place, you don’t want to be too prescriptive either. Let your support agents’ individual voices shine through while ensuring adherence to your overall brand voice.

AI tools can help your support team to adjust their tone and overall approach to customer communications. For example, Intercom’s Fin allows agents to customize tone of voice and make a response friendlier or more professional, and will soon allow teams to deliver replies automatically in their business’s specific tone of voice.

3. Quality

It may seem subjective but you also make choices about the quality of your support. Who you hire and the tools you use are crucial to the quality of the support you can offer. At Intercom, we believe that a strong human+AI partnership is crucial to delivering a world-class customer experience, and to standing out from the crowd of competitors.

AI chatbots can get up to speed on your support content near instantly, allowing them to start accurately answering your customers’ simple queries straight away – and leaving your support team to address the more complex problems and keep your help center up to date with fresh content.

As a result, your team’s expertise and skills will become even more important as they focus on more complex – and often, emotionally charged – customer queries and problems. Customer service agents’ ability to be resilient and empathetic, to manage situations effectively, and to thrive under pressure while remaining positive and optimistic will be more valuable than ever.

4. Speed

In an ideal world, all customers would have a real-time conversation with a friendly and knowledgeable support rep every time they had an issue. But that’s just not realistic at internet scale.

Or is it? Now that generative AI is transforming support, every customer query can be met quickly and personally by an AI chatbot, which can then either answer the question or triage it and send it to the right team, saving time for both your agents and your customers. For example, Fin, our AI chatbot, can resolve up to 50% of queries near-instantly.

Speed, like coverage and language support, can sometimes be a money problem. AI offers you the opportunity to scale your business without having to scale your support team at the same rate, freeing up a lot of time and bandwidth for support teams to add value in ways that truly uplevel the customer experience, instead of spending their time firefighting in the inbox.

And if you’re not using AI to create more value for your customers and your business, your competitors probably are. Where we used to have to hire more staff to provide speedy, multi-lingual, 24/7 support, AI can now handle that for us. That’s a fundamental change to the way customer service is delivered.

5. Coverage

Are you going to provide support 24 hours a day, 7 days a week? Or do you think Monday to Friday from 9 am to 5 pm will suffice? Just remember, even the most business-focused enterprise software products get usage out of office hours. What holidays are you going to observe? And will you provide skeleton cover during holiday periods or none at all?

AI makes these decisions easier by facilitating 24/7 support without scaling headcount. But you’ll still have to think about what questions you’ll want your chatbot to answer while you’re out of office – and ensure your knowledge base can enable your chatbot to provide accurate, up-to-date answers.

Consider the journey you want your customers to take while your support team is away, and supply your AI bot with the material it needs to maximize customer satisfaction.

6. Language

What languages to support and when to start supporting them can be a tricky decision, but one that’s becoming easier thanks to AI. The addressable market for organizations today has significantly increased thanks to the internet, and AI chatbots allow us to properly support those markets without dramatically scaling our teams.

Fin now provides support in more than 45 languages – a game-changer for smaller businesses looking to break into new markets around the world.

7. Process

If you don’t have robust processes in place, things will break as you scale, and the quality of your customer support will suffer as a result.

You will need to make sure team members feel empowered to make the decisions that are needed. At the very least you want to make sure you have processes in place around:

- Emergencies: How do you define an emergency and who gets notified? How are they informed and when?

- Escalation: This needs to be defined for every situation, not just emergencies. For product bugs, for example, when do you need to pull in a product engineer? This is especially important if your team is working with an AI chatbot – how can you reassure customers that they can speak to a human if they need to, and what workflows should you put in place to make sure that pathway is clear?

- Communication: How do members of the team find out about things?

- Refunds: Under what circumstances will you issue them and who processes them?

- Security: For instance, if someone asks to reset their password how do you verify their identity?

While your processes will need to change and evolve as you grow, it’s much easier to put them in place early than to try to graft processes onto work practices that have developed organically and are ingrained in your support team’s culture. As the team expands, simple, sensible processes make it easier for everyone to do a great job. No one is left wondering what they need to do – it’s clear what is required in a number of defined situations.
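To make the escalation point concrete, here is a minimal sketch of how a team might write its escalation rules down as code rather than leave them implicit. It is an illustration only, not how Intercom or Fin works: the queue names, the `Conversation` fields, and the `VIP_MONTHLY_SPEND` threshold (borrowed from the $2,000-a-month example earlier) are all assumptions.

```python
from dataclasses import dataclass

# Hypothetical threshold, taken from the $2,000-a-month example above.
VIP_MONTHLY_SPEND = 2000


@dataclass
class Conversation:
    monthly_spend: float        # customer's monthly spend in dollars
    mentions_cancelling: bool   # customer has said they intend to leave
    is_product_bug: bool        # issue looks like a product defect
    asked_for_human: bool       # customer asked to speak to a person


def escalation_target(conv: Conversation) -> str:
    """Return the (hypothetical) queue this conversation should be routed to."""
    if conv.mentions_cancelling:
        # VIP customers at risk of leaving go straight to an account manager.
        if conv.monthly_spend >= VIP_MONTHLY_SPEND:
            return "account_manager"
        return "support_agent"
    if conv.is_product_bug:
        return "product_engineering"
    if conv.asked_for_human:
        # Always keep a clear pathway from the chatbot to a person.
        return "support_agent"
    return "ai_chatbot"
```

Even a small rule set like this forces the decisions into the open: who counts as a VIP, which problems bypass the chatbot, and who picks them up.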

Examples of great customer support

Customer support is in our DNA, and we’re always excited to see our customers get creative with theirs and put many of our features on the frontline of their service. Let’s take a look at some of our favorite examples:

Hospitable

Hospitable automates the management of your short-term rentals, directly on your booking channel. Their customers are hosts all over the world who may need to contact support at any time. They were excited to use Fin, our AI chatbot, to offer high-quality support, 24/7.

“The handovers between Fin and our support team feel very natural and seamless for our customers, which is a great experience for them. In fact, in a recent poll of new customers, we found that 61% preferred to opt for the faster responses of AI vs waiting to speak with a customer support agent.” — Pierre-Camille Hamana, CEO and Founder of Hospitable.

Increasing efficiency while preserving customer trust has allowed Hospitable to scale its operations without increasing headcount, and reduce response times by 95%.

Wolt

Wolt is a food delivery company specializing in real-time logistics optimization. With 3,000 people on the support team, and millions of customers and courier partners across 25 countries, Wolt needed a seamless, efficient, and easy-to-use customer service platform that would enable them to create personal experiences at scale.

The team at Wolt aim to deliver a premium experience for their customers, and fast response time is a priority. Intercom was Wolt’s first choice when the team decided to implement messenger-based support.

“Customers are used to receiving their Wolt orders accurately and quickly. If problems occur – and unfortunately, sometimes they do – we need to be able to solve those even faster. We manage over a million conversations every week, have an average response time of less than 60 seconds, and our CSAT is way over 90%. Intercom enables us to do that.” — Pelle Blarke, International Strategy and Operations Manager at Wolt.

Rebag

Rebag is an e-commerce platform and retailer that buys, sells, and trades luxury handbags and accessories. The team needed a communications platform that would enable them to merge their traditional retail and e-commerce shopping experiences and optimize every stage of the customer journey, from checkout conversions, to ongoing engagement, to support.

Rebag now uses Intercom to power communications at every stage of the customer lifecycle. The team is able to meet customers where they are, nurture them through the buying journey, and provide stellar support along the way. With Intercom’s Support solution, Rebag is keeping median first-response time under a minute and has increased NPS by 15 points.

“We know that customers want a unique and memorable experience, so partnering with Intercom was the right decision for our team because it has allowed us to think big and deliver that in a seamless manner.” — Geronimo Chala, Chief Consumer Officer, Rebag.