Before ChatGPT rolled onto the scene a year ago, artificial intelligence (AI) and machine learning (ML) were the mysterious tools of experts and data scientists – teams with a lot of niche experience and specialized domain knowledge. Now, things are different.

You’re probably reading this because your company has decided to use OpenAI’s GPT or another LLM (large language model) to build generative AI features into your product. If that’s the case, you might be feeling excited (“It’s so easy to make a great new feature!”) or overwhelmed (“Why do I get different outputs every time and how do I make it do what I want?”) Or maybe you’re feeling both!

Working with AI might be a new challenge but it doesn’t need to be intimidating. This post distills my experience from years spent designing for “traditional” ML approaches into a simple set of questions to help you move forward with confidence as you start designing for AI.

A different kind of UX design

First, some background on how AI UX design is different from what you’re used to doing. (Note: I’ll be using AI and ML interchangeably in this post.) You might be familiar with Jesse James Garrett’s 5-layer model of UX design.

Jesse James Garrett’s Elements of User Experience diagram

Garrett’s model works well for deterministic systems, but doesn’t capture the additional elements of machine learning projects which will affect the UX considerations downstream. Working with ML means adding a number of additional layers into the model, in and around the strategy layer. Now, in addition to what you’re used to designing, you also need a deeper understanding of:

- How the system is built.

- What data is available to your feature, what it includes, how good and reliable it is.

- The ML models you’ll use, as well as their strengths and weaknesses.

- The outputs your feature will generate, how they will vary, and when they will fail.

- How humans might react differently to this feature than you’d expect or want.

Instead of asking yourself “How might we do this?” in response to a known, scoped problem, you might find yourself asking, “Can we do this?”

Especially if you’re using LLMs, you’ll likely be working backwards from a technology that unlocks entirely new capabilities, and you have to determine whether they’re appropriate for solving problems you know about, or even problems you’ve never considered solvable before. You might need to think at a higher level than usual – rather than displaying units of information, you might want to synthesize large amounts of information and present trends, patterns, and predictions instead.

“You’re designing a probabilistic system that is dynamic and that reacts to inputs in real time”

Most importantly, instead of designing a deterministic system that does what you tell it to do, you’re designing a probabilistic system that is dynamic and that reacts to inputs in real time – with outcomes and behaviors that will be unexpected or unexplainable at times, and where weighing tradeoffs might be a murky exercise. This is where my set of five key questions comes into play – not to provide you with answers, but to help you take the next step in the face of uncertainty. Let’s dive in.

1. How will you ensure good data?

Data scientists love to say “Garbage in, garbage out.” If you start with bad data, there is generally no way you will end up with a good AI feature.

For instance, if you are building a chatbot that generates answers based on a collection of information sources, like articles in an online help center, low-quality articles will ensure a low-quality chatbot.

When the team at Intercom launched Fin in early 2023, we realized that many of our customers didn’t have an accurate sense of the quality of their help content until they started using Fin and discovered what information was or wasn’t present or clear in their content. The desire for a useful AI feature can be an excellent forcing function for teams to improve the quality of their data.

So, what is good data? Good data is:

- Accurate: The data correctly represents reality. That is, if I’m 1.7m tall, that’s what it says in my health record. It doesn’t say I’m 1.9m tall.

- Complete: Data includes the required values. If we need height measurement to make a prediction, that value is present in all patients’ health records.

- Consistent: Data doesn’t contradict other data. We don’t have two fields for height, one saying 1.7m and the other saying 1.9m.

- Fresh: Data is recent and up-to-date. Your health record shouldn’t reflect your height as a 10-year-old if you’re now an adult – if it has changed, the record should change to reflect it.

- Unique: Data is not duplicated. My doctor shouldn’t have two patient records for me, or they won’t know which one is the right one.

It’s rare to have a ton of really high quality data, so you might have to make a quality/quantity tradeoff when developing your AI product. You may be able to manually create a smaller (but hopefully still representative sample) of data, or filter out old, inaccurate data to create a reliable set.

Try to start your design process with an accurate sense of how good your data is, and a plan for improving it if it’s not great at the start.

2. How will you adjust your design process?

As usual, it’s useful to start with a low-fidelity exploration to determine your ideal user experience for the problem you’re hoping to solve. You’ll likely never see it in production, but this north star can help align you and your team, get them excited, and also provide a concrete starting point to investigate how feasible it actually is.

“Spend some time understanding how the system works, how the data is collected and used, and whether your design captures the variance you might see in model outputs”

Once you have this, it’s time to design the system, data, and content outputs. Go back to your north star and ask “Is what I designed actually possible? What are some variations in case X or Y doesn’t work well?”

Spend some time understanding how the system works, how the data is collected and used, and whether your design captures the variance you might see in model outputs. With AI, a poor output is a poor experience. In the chatbot example, this might look like an answer that doesn’t give enough detail, answers a tangential question, or doesn’t clarify the question when it should.

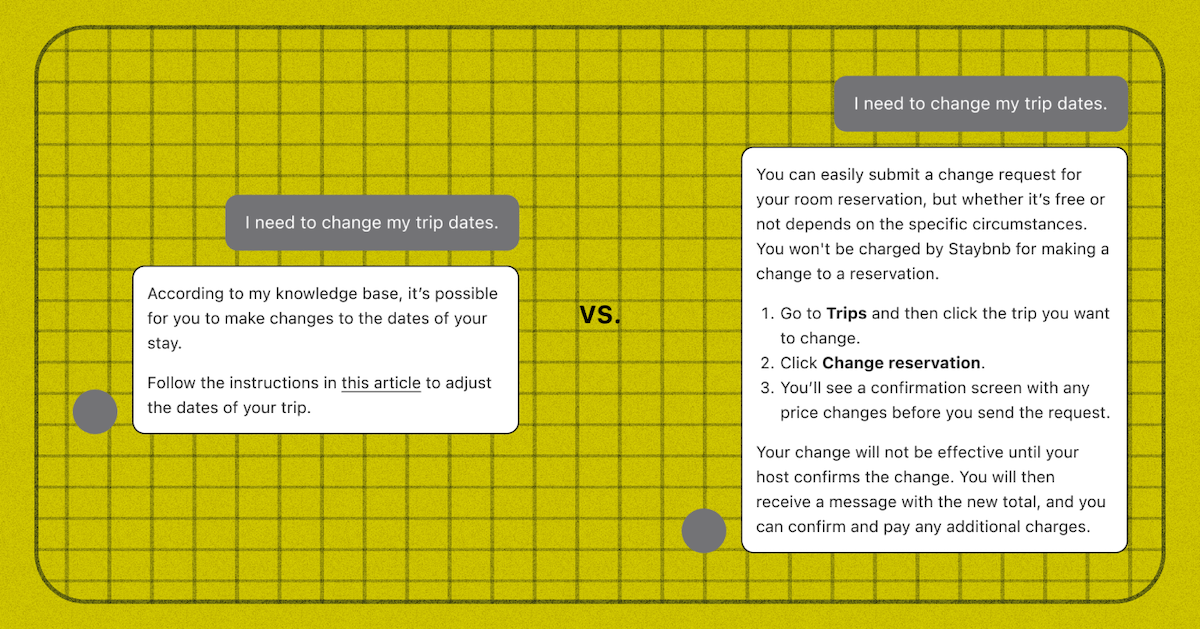

Two examples of how an AI chatbot’s output can be displayed

Two examples of how an AI chatbot’s output can be displayed

In the illustration above, the example on the left is similar to many early outputs we saw when developing our Fin chatbot, which were accurate but not very informative or useful because they referred back to the original article instead of stating the answer inline. Design helps you arrive at the example on the right, which has a more complete answer with clear steps and formatting.

Don’t leave the contents of the output to your engineers – the experience of it should be designed. If you’re working on an LLM-based product, this means you should experiment with prompt engineering and develop your own point of view on what the shape and scope of the output should be.

You’ll also need to consider how to design for a new set of potential error states, risks, and constraints:

Error states

- Cold start problem: Customers might have little or no data when they first use your feature. How will they get value right from the beginning?

- No prediction: The system doesn’t have an answer. What happens then?

- Bad prediction: The system gave a poor output. Will the user know it’s wrong? Can they fix it?

Risks

- False positives, like when the weather forecast predicts rain, but it doesn’t rain. Will there be a negative outcome if this happens with your product?

- False negatives, like when the weather forecast predicts no rain, but there’s a downpour. What will the outcome be if this happens with your feature?

- Real world risks, like when ML outputs directly influence or impact peoples’ lives, livelihoods, and opportunities. Are these applicable to your product?

New constraints

- User constraints, like incorrect mental models about how the system works, unrealistic expectations or fears of your product, or the chance of complacency over time.

- Technical constraints, like API or storage and compute cost, latency, uptime, data availability, data privacy, and security. These are primarily an issue for your engineers but they can also have a direct impact on the user experience, so you should understand the limitations and possibilities.

3. How will it work when the ML fails?

When, not if. If you are surprised by the ways that your AI product fails in production, you didn’t do enough testing beforehand. Your team should be testing your product and outputs during the entire build process, not waiting until you’re about to ship the feature to customers. Rigorous testing will give you a solid idea of how and when your product might fail, so you can build user experiences to mitigate those failures. Here are some of the ways you can effectively test your product.

Start with your design prototypes

Prototype with real data as much as possible. “Lorem ipsum” is your enemy here – use real examples to stress test your product. For example, when developing our AI chatbot Fin, it was important to test the quality of answers given to real customer questions, using real help center articles as source material.

An example of how two designers might approach designing a chatbot that provides AI-generated answers

In this comparison, we can see that the colorful example on the left is more visually appealing, but gives no details about the quality of the answer-generation experience. It has high visual fidelity but low content fidelity. The example on the right is more informative for testing and validating that the AI responses are actually good quality, because it has high content fidelity.

Designers are often more familiar working along the range of visual fidelity. If you’re designing for ML, you should aim to work along the spectrum of content fidelity until you’ve fully validated that the outputs are of sufficient quality for your users.

The colorful Fin design will not help you judge whether the chatbot can answer questions well enough that customers will pay for it. You will get better feedback by showing customers a prototype, however basic, that shows them real outputs from their actual data.

Test on a large scale

When you think you have achieved consistently good quality outputs, backtest to validate your output quality at a larger scale. This means having your engineers go back and run the algorithm against more historical data where you know or can reliably judge the quality of the output. You should be reviewing the outputs for quality and consistency – and to surface any surprises.

Approach your minimum viable product (MVP) as a test

Your MVP or beta release should help you resolve any remaining questions and find any more potential surprises. Think outside the box for your MVP – you might build it in-product, or it could just be a spreadsheet.

“Make the outputs work, then build the product envelope around it”

For example, if you’re creating a feature that clusters groups of articles into topic areas and then defines the topics, you’ll want to ensure you’ve gotten the clustering right before you build the complete UI. If your clusters are bad, you may need to approach the problem differently, or allow for different interactions to adjust the cluster sizes.

You might want to “build” an MVP which is just a spreadsheet of the outputs and named topics, and see if your customers find value in the way you’ve done it. Make the outputs work, then build the product envelope around it.

Run an A/B test when you launch your MVP

You’ll want to measure the positive or negative impact of your feature. As a designer, you probably won’t be in charge of setting this up, but you should seek to understand the outcomes. Do the metrics indicate that your product is valuable? Are there any confounding factors in the UI or UX that you might need to change based on what you’re seeing?

“You can use telemetry from your product’s usage combined with qualitative user feedback to better understand how your users are interacting with your feature and the value they are deriving from it”

On the Intercom AI team, we run A/B tests whenever we release a new feature with a high enough volume of interactions to determine statistical significance within a few weeks. For some features, though, you just won’t have the volume – in that case, you can use telemetry from your product’s usage combined with qualitative user feedback to better understand how your users are interacting with your feature and the value they are deriving from it.

4. How will humans fit into the system?

There are three major stages of the product usage lifecycle that you should consider when you’re building an AI product:

- Setting up the feature before use. This might include choosing a level of autonomy that the product will operate under, curating and filtering data that will be used for predictions, and setting access controls. An example of this is the SAE International autonomous vehicle automation framework, which outlines what the vehicle can do on its own, and how much human intervention is allowed or required.

- Monitoring the feature while it’s in operation. Does the system need a human to keep it on track while it works? Do you need an approval step to ensure quality? This might mean operational checks, human guidance, or live approvals before an AI output is sent to the end user. An example of this could be an AI article writing assistant, which suggests edits to a draft help article which a writer has to approve before setting them live.

- Evaluating the feature after launch. This usually means reporting, providing or actioning feedback, and managing data shifts over time. At this stage, the user is looking back on how the automated system performed, comparing it to historical data or looking at quality and deciding how to improve it (through model training, data updates, or other methods). An example of this might be a report detailing what questions end users asked your AI chatbot, what the responses were, and suggested changes you can make to improve the chatbot’s answers to future questions.

You can use these three phases to help inform your product development roadmap, too. You could have multiple products and multiple UIs based on the same or very similar backend ML tech, and just change up where the human is involved. Human involvement at different points in the lifecycle can completely change the product proposition.

You can also approach AI product design in terms of time: build something now that might need a human at a certain point, but with a plan to remove them or move them to a different stage once your end users get used to the outputs and quality of the AI feature.

5. How will you build user trust in the system?

When you introduce AI into a product, you’re introducing a model with agency to act in the system, when previously only the users themselves had that agency. That adds risk and uncertainty for your customers. The level of scrutiny that your product receives will understandably increase, and you will need to earn your users’ trust.

You can try to do that in a few ways:

- Offer a “dark launch” or side-by-side experience where customers can compare outputs or see outputs without exposing them to end users. Think of this like a user-facing version of the backtesting that you did earlier in the process – the point here is to give your customers confidence in the range and quality of outputs that your feature or product will deliver. For example, when we launched Intercom’s Fin AI chatbot, we offered a page where customers could upload and test out the bot on their own data.

- Launch the feature under human supervision first. After some time with good performance, your customers will likely trust it to operate without human monitoring.

- Make it easy to turn the feature off if it’s not working. It’s easier for users to adopt an AI feature into their workflow (especially a business workflow) if there’s no risk that they might mess something up and be unable to stop it.

- Build a feedback mechanism so that users can report poor results, and ideally have your system act on those reports to make improvements to the system. However, be sure to set realistic expectations as to when and how the feedback will be actioned so customers don’t expect instant improvements.

- Build robust reporting mechanisms to help your customers understand how the AI is performing and what ROI they’re getting from it.

Depending on your product, you may want to try more than one of these to encourage users to gain experience and feel comfortable with your product.

Patience is a virtue when it comes to AI

I hope these five questions will help guide you as you journey into the new, fast-moving world of AI product development. One final piece of advice: be patient as you launch your product. It can take significant effort to get an ML feature up and running and tuned to the way a company likes to work, and so the adoption curve can look different than you might expect.

“After you’ve built a few AI features you’ll start to get a better sense of how your particular customers will react to new launches”

It’s likely that it will take a bit of time before your customers see the highest value, or before they can convince their stakeholders that the AI is worth the cost and should be launched more broadly to their users.

Even customers that are really excited about your feature might still take time to implement it, either because they need to do prep work like cleaning their data, or because they are working to develop trust before launching it. It might be hard to anticipate what adoption you should expect, but after you’ve built a few AI features you’ll start to get a better sense of how your particular customers will react to new launches.