Building great relationships with your customers and offering them world-class support depends on a lot of things, but one of the most important is the quality of the conversations you have with them.

If those conversations are inconsistent or unsatisfactory, you will struggle to build valuable, long-term relationships with your customers.

To ensure that conversation quality stays high, however, you have to be able to measure the quality, and that can be quite challenging. There are a few common ways to measure it, such as with Customer Satisfaction (CSAT) ratings. Other important ingredients for a quality conversation can be harder to measure, such as the product knowledge and tone of your customer support agents.

Effectively measuring our conversation quality was an issue we faced at Intercom, especially as we scaled up so fast. Our support organization started small, with two or three people working closely together and having good visibility of each other’s conversations. However, as we grew and began supporting customers across different time zones with staff located in different offices, it became a challenge to ensure we were giving consistently excellent support to all of our customers.

Whether you are a growing startup or a mature company, maintaining customer conversation quality is likely a pain you have felt. Without some kind of auditing tool, it can be extremely difficult to ensure your support organization is maintaining up-to-date product knowledge and striking the right tone throughout conversations with customers.

Conversation Carousel

A growing support team also brings benefits, however. While having more support agents means that there can be greater variance in conversation quality, you also have more talent and resources on your team to conduct reviews of conversation quality. With more people available to focus on this problem, we were able to develop a peer review system called Conversation Carousel.

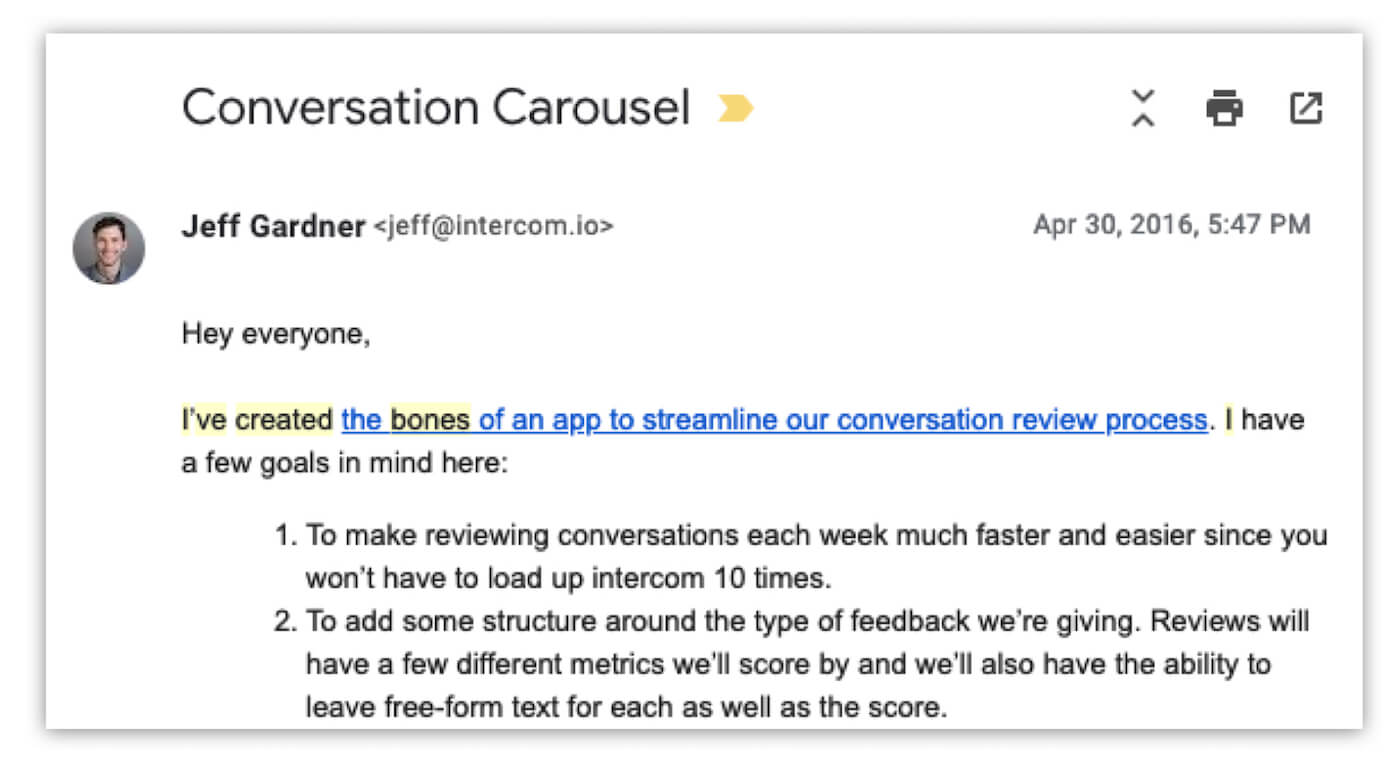

An internal email from Jeff, our then-head of Customer Support and current head of Platform Partnerships, announcing Conversation Carousel

This tool helps with the onboarding of new staff members and identifies team members who need further guidance. We created an internal Ruby on Rails app for reviewing conversations, built on our REST API and Sidekiq, a background job processor for Ruby.

When a customer support agent logs into the app, they can see reviews left by teammates on their conversations. They can also choose to fetch a number of teammates’ conversations in order to review them. These are pulled via Sidekiq. To ensure the review process is evenly spread across the team, everyone has to review a certain number of teammates’ conversations every week. Custom logic in the Rails app ensures that conversations aren’t pulled for review if, for example, they are too short or have been labelled as spam.
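The filtering described above could be sketched roughly as follows. This is an illustration in Python rather than the actual Rails code, and every name in it (`Conversation`, `is_reviewable`, `pick_for_review`, `MIN_MESSAGES`) is hypothetical. The threshold for "too short" and the use of random sampling are assumptions, since the article doesn't specify either.

```python
import random
from dataclasses import dataclass

# Assumed threshold: the article does not say what counts as "too short".
MIN_MESSAGES = 3


@dataclass
class Conversation:
    id: int
    agent: str              # teammate who handled the conversation
    message_count: int
    tags: frozenset = frozenset()


def is_reviewable(conv, reviewer):
    """Return True if `conv` is a sensible candidate for `reviewer` to review."""
    if conv.agent == reviewer:
        return False        # peer review: never your own conversations
    if conv.message_count < MIN_MESSAGES:
        return False        # too short to say anything useful about
    if "spam" in conv.tags:
        return False        # labelled as spam
    return True


def pick_for_review(conversations, reviewer, count, rng=None):
    """Pick up to `count` eligible conversations at random (sampling is an assumption)."""
    rng = rng or random.Random()
    eligible = [c for c in conversations if is_reviewable(c, reviewer)]
    return rng.sample(eligible, min(count, len(eligible)))
```

Keeping the eligibility rules in one small predicate makes it easy to add further exclusions later without touching the code that fetches conversations.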

Snapshot of Conversation Carousel

Defining conversation criteria

A reviewer then scores a conversation based on a number of criteria. At Intercom we score tone, quality and whether the conversation followed internal processes.

- Quality is a measurement of accuracy and completeness. For simple issues, you should ask yourself: was the correct product functionality conveyed clearly and concisely? For more complex issues: did your teammate treat the problem, not just a symptom? Did they provide the best answer, workaround or options to achieve the desired result?

- Tone is a measurement of empathy for the customer and their perspective, combined with a high degree of professionalism. Did the conversation sound distant and robotic, or did your teammate make a connection with the customer?

- Followed internal processes is a qualifying measurement to confirm whether or not internal processes were followed while a conversation was being handled. Followed internal processes is a Yes or No response, but we measure quality and tone on a spectrum from “Needs Improvement” to “Good” to finally “Exceptional.”

We also include a free-form text box for comments that explain your ratings. This is what a review might look like:

Reviewed by John Smith

Quality: Good

Tone: Good

Followed Internal Processes: Yes

Quality: Simple question with clear answer and screenshot to back up that this feature does in fact exist. Great job.

Tone: Friendly and positive throughout.

Process: Tags look perfect to me.
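A review like the one above could be modelled with a small data structure. The sketch below is only an illustration in Python, not how Conversation Carousel stores reviews; the class and field names (`Rating`, `Review`, `summary`) are hypothetical. It reflects the two scales described earlier: a three-step rating for quality and tone, and a Yes or No for internal processes.

```python
from dataclasses import dataclass
from enum import Enum


class Rating(Enum):
    """Three-step scale used for both quality and tone."""
    NEEDS_IMPROVEMENT = "Needs Improvement"
    GOOD = "Good"
    EXCEPTIONAL = "Exceptional"


@dataclass
class Review:
    reviewer: str
    quality: Rating
    tone: Rating
    followed_internal_processes: bool   # a plain Yes/No, not a scale
    comments: str = ""                  # free-form text box

    def summary(self):
        """Render the header lines of a review in the format shown above."""
        process = "Yes" if self.followed_internal_processes else "No"
        return "\n".join([
            f"Reviewed by {self.reviewer}",
            f"Quality: {self.quality.value}",
            f"Tone: {self.tone.value}",
            f"Followed Internal Processes: {process}",
        ])


review = Review(
    reviewer="John Smith",
    quality=Rating.GOOD,
    tone=Rating.GOOD,
    followed_internal_processes=True,
    comments="Tone: Friendly and positive throughout.",
)
print(review.summary())
```

Using an enum for the rating keeps reviewers to the agreed scale, while the comments field stays free-form.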

Reviews bring results

Here at Intercom we’ve seen great results from implementing this peer review system. We have made doing a minimum of five peer reviews a week part of our support team’s key performance indicators (KPIs). It’s important to note, though, that people’s conversation review results (Needs Improvement, Good, Exceptional) aren’t part of their KPIs. This way, the high-level stats on an individual’s conversations can be shared with management without peers directly impacting their colleagues’ KPIs.
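As a rough illustration of the weekly minimum, the Python sketch below counts how many reviews each teammate has completed and reports who is still short. The function name and the shape of the input (a list with one reviewer name per completed review) are assumptions made for this example. Note that it counts reviews written, not the ratings given, in line with keeping review results out of KPIs.

```python
from collections import Counter

WEEKLY_MINIMUM = 5  # from the article: at least five peer reviews a week


def reviewers_below_minimum(team, reviewers_this_week, minimum=WEEKLY_MINIMUM):
    """Map each teammate who is short of the minimum to the number of reviews remaining.

    `reviewers_this_week` holds one reviewer name per review completed this week.
    """
    done = Counter(reviewers_this_week)
    return {name: minimum - done[name] for name in team if done[name] < minimum}
```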

Spreading these reviews across the team means that a large volume of conversations can be reviewed every week. While we do have some guidelines on how we talk to our customers, we give our staff a lot of freedom to add their personality through emojis, GIFs and humor. However, we always ask ourselves what problem the customer needs solved and whether the conversation is getting to the heart of it.

Peer review is useful not only for picking up on colleagues who need further guidance in certain areas, but also for recognizing those members of staff who are excelling at their jobs. Excellent conversations, such as those that exemplify de-escalation or completely nail your company tone, can be highlighted, shared or even added to a knowledge base.

What is perhaps most distinctive and exciting about this method is that it allows our support staff, those actually talking to customers, to set the standard of what a good conversation looks like and to shape how we talk to our customers. It also builds a culture of collective upskilling, as colleagues who excel at certain aspects of their job can showcase their work to those reviewing it. Finally, it enables us to hold ourselves to the high standard that we set.

If you enjoyed this article, check out our book, Intercom on Customer Support.