Customer Satisfaction Score (CSAT) is by far the most popular customer service quality metric used across all industries and teams of all sizes. But CSAT alone is actually a poor indicator of support quality.

This is a guest post from Martin Kõiva, the CEO of Klaus, a conversation review tool for support teams that aims to improve customer service quality by making internal feedback easy and systematic.

In light of recent events, CSAT scores are understandably dipping for teams across the globe. Support leaders shouldn’t immediately press the panic button though.

Letting your customers be the sole judges of what you do neglects your own values and expectations for your support team’s performance. Especially now, with many companies dealing with high support volumes and distressed customers, looking at CSAT alone can quickly lead your team off course.

Successful support teams combine CSAT with another metric, Internal Quality Score (IQS), to get a fuller picture of their operations.

Let’s explore the shortcomings of CSAT, analyze how IQS can complement it, and see how to get a complete overview of what is really happening in your support interactions.

Why CSAT is not enough

CSAT can be a great customer service metric that helps you keep up with your customers’ expectations. However, more and more support leaders are beginning to understand that CSAT gives only half of the picture and doesn’t necessarily reflect your support quality.

At this stressful time where many support teams are handling emotional customers and having tough conversations, it’s even more apparent that CSAT doesn’t tell the whole story.

Here’s why CSAT is not enough to draw any definitive conclusions about customer service performance:

1. CSAT is a mixture of product, support, and other feedback

It’s difficult to pin down the exact cause for a negative rating by just looking at the score you received.

For example, if you analyze your support team’s performance based on CSAT alone, a customer’s negative feedback about long response times could easily mislead you. Many companies have seen their support volumes increase since the world suddenly shifted to remote work. In such cases, a low CSAT score probably reflects the customer’s dissatisfaction with waiting in the queue rather than how well your agents handled the conversation.

2. Customers don’t see the complex processes behind their requests

Because customers don’t see the whole picture, they may be disappointed about something that was impossible for your team to achieve in the first place.

Not all issues can be solved immediately and some of your users’ requests can never be solved by your team at all. Customers don’t know your product roadmap and internal processes, so bear in mind that some of the negative feedback could be directed at something your team had no control over.

3. Customers don’t know your quality standards

At times, your users might leave positive feedback on support interactions that weren’t actually up to par based on your internal criteria. Other times, your agents will receive negative feedback on things that were out of their control (e.g. issues with the product) though they handled the cases flawlessly.

Every company and customer service team has its own set of goals and standards, which might differ from a particular customer’s idea of excellent customer care. So, CSAT is often a reflection of how your standards and performance align with what your customers, not you, believe is right.

CSAT does not give answers to everything related to the quality of your customer experience. It’s a great indicator of your users’ attitudes towards what you do, but to make the most of this feedback, you need to combine it with Internal Quality Score (IQS).

What’s Internal Quality Score (IQS)?

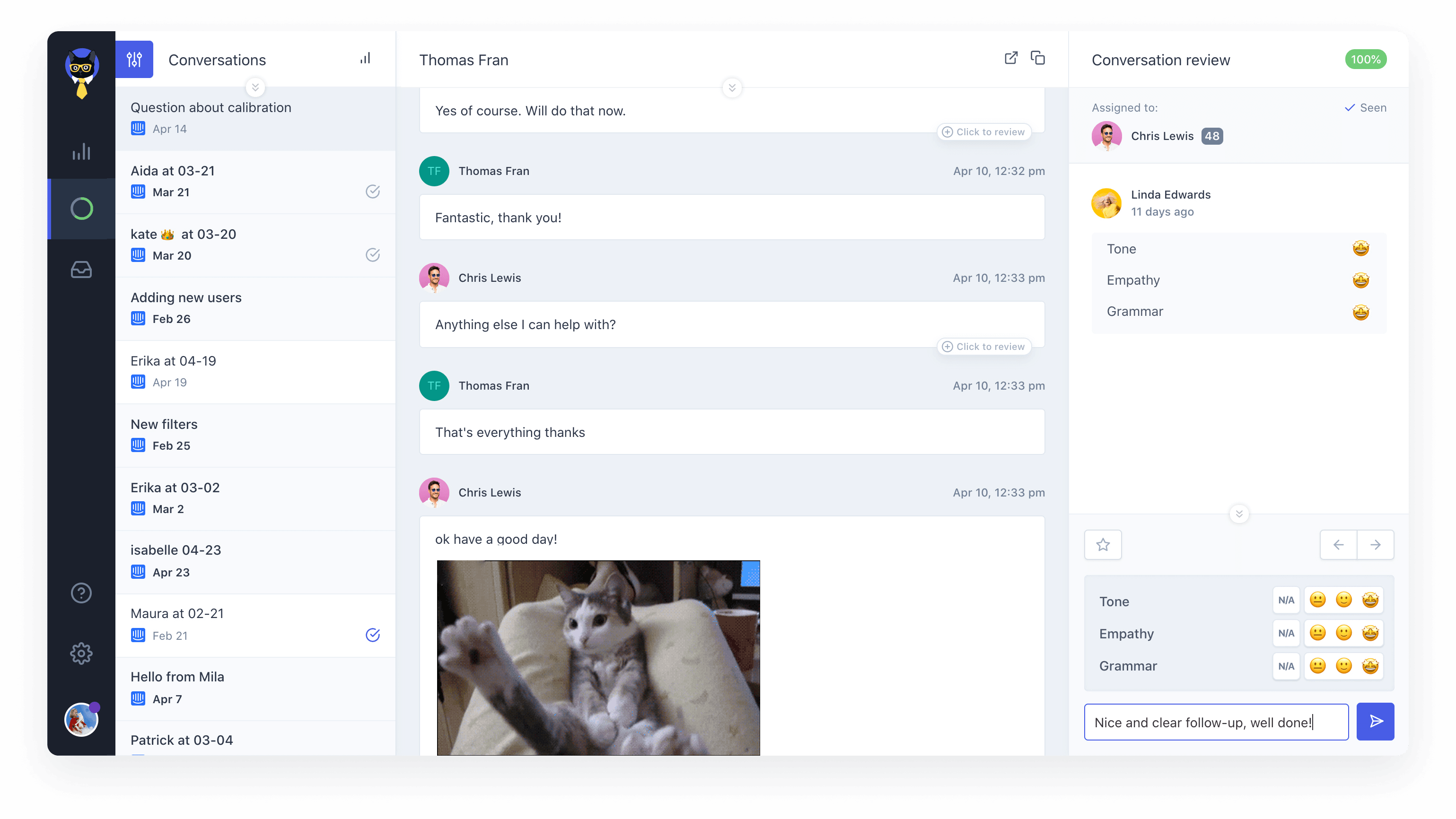

Internal Quality Score is the metric of conversation reviews – internal support interaction evaluations that teams conduct to secure a high level of support quality. It tells you how well your agents’ responses align with your internal quality standards, expressed as a percentage of the maximum possible score.
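To make the percentage concrete, here is a minimal Python sketch of one way to calculate it. The scorecard categories, the 5-point scale, and the simple averaging across reviews are all assumptions for illustration; your own scorecard and weighting may differ.

```python
from statistics import mean

# Hypothetical scorecard: rating category -> maximum points a reviewer can award.
SCORECARD = {"product_knowledge": 5, "tone_empathy": 5, "solution": 5}


def review_score(ratings):
    """Return one review's score as a percentage of the maximum possible score."""
    earned = sum(ratings[category] for category in SCORECARD)
    possible = sum(SCORECARD.values())
    return 100 * earned / possible


def iqs(reviews):
    """Average the per-review percentages into a single IQS figure."""
    return mean(review_score(ratings) for ratings in reviews)


# Two example reviews with made-up ratings.
reviews = [
    {"product_knowledge": 5, "tone_empathy": 4, "solution": 5},
    {"product_knowledge": 3, "tone_empathy": 5, "solution": 4},
]
print(round(iqs(reviews), 1))  # 86.7
```

The first review earns 14 of 15 points and the second 12 of 15, so the two percentages average out to roughly 86.7%.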

Here’s how to measure and track IQS:

- Build a scorecard that reflects your quality standards. For example, most companies use rating categories like Product Knowledge, Tone/Empathy, and Solution to check how well agents’ responses performed against their internal expectations.

- Choose who should do the reviews. Managers are often responsible for the team’s performance; however, they’re usually also loaded with various other tasks and duties. Peer and self-reviews are a great alternative to manager reviews. Also, dedicated QA specialists are becoming more and more popular among quality-oriented support teams.

- Decide how many conversations to review. Most companies track about 5-10% of their total volume to get a statistically relevant overview of their performance. However, some support teams aim to provide feedback on all interactions during, for example, onboarding or training programs.

- Conduct regular conversation reviews. If you analyze your agents’ interactions on a daily, weekly, or monthly basis, you’ll get a neat overview of how your team is performing at all times.
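If you want to pick the conversations for review programmatically, the sampling step can be sketched in a few lines of Python. The function name, the conversation IDs, and the 5% default are hypothetical; adjust the share to whatever your team can realistically review.

```python
import random


def pick_for_review(conversation_ids, share=0.05, seed=None):
    """Return a random sample covering roughly `share` of the conversations."""
    if not 0 < share <= 1:
        raise ValueError("share must be greater than 0 and at most 1")
    ids = list(conversation_ids)
    if not ids:
        return []
    # Always review at least one conversation, even for very small volumes.
    sample_size = max(1, round(len(ids) * share))
    # A seed makes the selection reproducible; leave it as None in normal use.
    return random.Random(seed).sample(ids, sample_size)


# 200 made-up conversation IDs, 5% of which are selected for review.
sample = pick_for_review(range(1, 201), share=0.05, seed=42)
print(len(sample))  # 10
```

Sampling without replacement means no conversation is picked twice, and choosing at random avoids reviewing only the conversations that happen to stand out.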

If you conduct regular conversation reviews, you’ll be able to track your Internal Quality Score over time.

Note that the best part about tracking IQS is that you’ll notice any changes in your support quality as soon as they happen. You’ll be able to correct the course before it’s too late.

How to balance CSAT with IQS

IQS balances your customers’ feedback with your own perspective and helps you understand whether customers’ expectations align with your company goals. At times, you might realize that you need to take your CSAT results with a grain of salt.

Other times, you’ll get valuable insight into why some of your conversations ended with negative reviews. Most importantly, you’ll learn how you can improve and avoid making the same mistakes again in the future.

For example, Automattic, the maker of WordPress.com and WooCommerce, consistently delivers a CSAT of 95% or more. Yet, 56.6% of its support interactions fall under the “Room for Improvement” category in conversation reviews.

Here’s how to pair your CSAT up with IQS for a successful support quality program:

1. Analyze all the conversations rated by your customers.

Most teams receive external feedback on a relatively small proportion of their support interactions. The ones that do get scored are usually those that were handled either amazingly well or really badly (according to your customers).

To make the most out of your CSAT in terms of support quality, always review:

- Interactions that received a negative rating to understand whether the feedback was aimed at your support quality or other business areas like product or marketing. Treat every piece of negative feedback as a lesson you can use to improve your service.

- Conversations rated positively to understand what works best with your customers. Try to pin down the activities that drive customer satisfaction, repeat them, and see how they affect your business results.

2. Review a random sample of your support interactions.

If you focus only on the conversations rated by your customers, you’ll neglect a large part of your support volume that never receives any feedback from your customers. Pick random conversations for review to get a broader overview of your team’s performance. Also, giving regular feedback is crucial for your support agents’ professional growth and motivation. “That’s fine, continue” is great feedback that often goes unsaid.

3. Compare your CSAT and IQS trends.

Just like with every other business metric, the real value of CSAT and IQS becomes clear when you track them over time. Moreover, if you compare the graphs of those two quality KPIs, you’ll immediately notice when something goes haywire. For example, if you see a sudden drop in CSAT while IQS remains high, it might indicate that areas other than support are dragging customer satisfaction down. Investigate the latest changes you’ve made to your product or other business activities that might have caused the dissatisfaction, fix them, and see how CSAT and IQS continue to grow hand in hand.
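If you export both metrics per week or month, the comparison can also be automated. The Python sketch below flags periods where CSAT fell sharply while IQS held steady; the function name and both thresholds are arbitrary assumptions, so tune them to your own numbers.

```python
def flag_divergence(csat, iqs, csat_drop=5.0, iqs_tolerance=2.0):
    """Return the indexes of periods where CSAT dropped sharply but IQS did not.

    `csat` and `iqs` are equal-length lists of percentages, one value per period.
    """
    if len(csat) != len(iqs):
        raise ValueError("csat and iqs must cover the same periods")
    flagged = []
    for i in range(1, len(csat)):
        csat_change = csat[i] - csat[i - 1]
        iqs_change = iqs[i] - iqs[i - 1]
        # CSAT fell by at least `csat_drop` points while IQS lost
        # no more than `iqs_tolerance` points in the same period.
        if csat_change <= -csat_drop and iqs_change >= -iqs_tolerance:
            flagged.append(i)
    return flagged


# Made-up weekly figures: CSAT dips in the third week while IQS stays level.
weekly_csat = [94.0, 93.0, 85.0, 86.0]
weekly_iqs = [88.0, 89.0, 88.5, 88.0]
print(flag_divergence(weekly_csat, weekly_iqs))  # [2]
```

A flagged period is only a prompt to investigate, for example by checking what changed in your product or processes that week.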

IQS is a great customer service metric that helps to balance your customers’ subjective point of view. To unveil the full potential of your customer feedback, you need to combine it with internal reviews that help you make sense of this data and learn from it.

How to track CSAT and IQS on Intercom

Sending out customer surveys and going through support interactions might sound like a real hassle – and it probably will be if you decide to do it manually. Luckily, there are excellent tools out there that will help you collect, analyze, and report CSAT and IQS.

To start tracking CSAT and IQS on Intercom:

- Set up the conversation rating Task Bot. The bot will send the survey to your customers via chat or email and you’ll be able to track the results on your conversation rating summary.

- Connect your Intercom account with a conversation review tool like Klaus. This way you’ll be able to automatically pull in conversations from your Intercom account and review them based on your custom scorecard, notify agents about the feedback they’ve received, and track your IQS by time period, agent, and rating category.

With a conversation review tool like Klaus, you can track your Internal Quality Score over time.

CSAT and IQS are a match made in heaven. Without one of them, your customer service feedback will be too biased towards either your users’ expectations or your own standards, which might not match those of your customers.

Keeping an eye on both internal and external quality metrics gives you a precise overview of how your team is doing and helps you pinpoint the areas in which you need to improve.