For once, the big debate isn’t about the brand of Post-Its or Sharpies. It’s not personas versus Jobs-to-be-Done. It’s not even about what should be a roadmap and what shouldn’t be. It’s about this idea that there’s a core difference between the output of a product team and the business outcome generated.

I’m joined in episode six of Intercom on Product, as always, by SVP of Product Paul Adams, who spoke about this topic at a recent conference. In that talk, he explored the notion that there’s actually a third component in this equation – and that it’s just as important as output and outcome. That element? Inputs.

In our chat, we discuss the five inputs that guide us at Intercom; how to think about the relationship between inputs, outputs and outcomes; and how to frame projects as customer problems instead of business problems. You can listen to our full conversation above, or read a transcript of our conversation below.

If you enjoy the conversation and don’t want to miss the rest of the series, you can subscribe on iTunes or Google Podcasts, stream on Spotify or Stitcher, or you can grab the RSS feed in your player of choice.

Des Traynor: Welcome to Intercom on Product. This is our sixth episode, and once again, I’m joined by Mr. Paul Adams. Paul, could you talk to our listeners about what’s going on here?

Paul Adams: Yeah, sure. We often talk about outcomes being more important than output. There’s a lot of energy, like you said, in the industry. So for example, Josh Seiden has written a great book called Outcomes Over Output. The theme of Marty Cagan’s most recent version of Inspired, his classic product management book, is outcomes over output. And output, in my opinion, is shipping: shipping product, shipping things out the door, and outcome is the impact of that. We can get into the specifics of what does impact mean? Is it a business result or whatever, but that’s the distinction between the two.

Des: So it’s like what you shipped versus what happened because of the thing you shipped.

Paul: Yeah, exactly. The return you got on it. And there’s been so much energy over the years, and you can blame or credit whoever you want: lean startup, lean UX, all these movements, outputs.

Des: “Move fast and break things,” all that shit.

“There’s so much emphasis on output that people have stopped thinking or thinking enough about the impact of that output”

Paul: Yeah. Ship, ship, ship, all about shipping. And startups die unless they ship. The startups that ship more often, more frequently, faster are the ones who learn faster; they’re the ones who then iterate and thrive.

Des: It’s fair to say we believe all that, as in we’re riddled with those sorts of messages that shipping is the heartbeat, et cetera.

Paul: Absolutely. One of our three principles is “ship to learn.” Another one is “think big, start small,” meaning if you start small, you’ll do it sooner. So we absolutely do believe in those things, but the movement around trying to correct this is that there’s so much emphasis on output that people have stopped thinking or thinking enough about the impact of that output. That’s the gist of it.

Des: So, this is almost like a pendulum swing back towards an idea of, “Hey, it’s not about putting code live on a server so that people can execute it, it’s actually about generating a business return.” What the hell’s wrong with that? That sounds perfectly correct.

Inputs: a third component in the equation

Paul: Yeah. I think our listeners will realize that our thinking is evolving on this topic, too. Outcomes are certainly something we are discussing a lot internally at Intercom these days, more than we’ve done in the past. The case I was making in the talk I gave a couple of days ago was that it’s less about outcomes over output. Like you said, when I read Josh’s book, I was like, “This all makes sense to me.” I also read our principles about shipping and our obsession with shipping, and Darragh Curran, who runs our engineering team, has a blog post about how shipping is a company’s heartbeat. And I believe all that, too. And so the case I was making simply at the conference was: “It’s not about outcomes, it’s about the system. It’s about outcomes and outputs and their relationship, but a third thing, which is inputs.” The full system I think every single software team has – no matter who you are, how you work, whether you’re waterfall or agile – is inputs. Meaning, what projects do you do or what –

Des: Are they the inputs?

Paul: Well, the inputs tell you what projects to do. So the inputs could be a vision or a feature request or a customer complaint or whatever. So you have all these inputs, and then those inputs determine what projects you do.

Des: Inputs to what? Inputs to the product team?

“The product should change, and then that change in the product should result in a change in customer behavior”

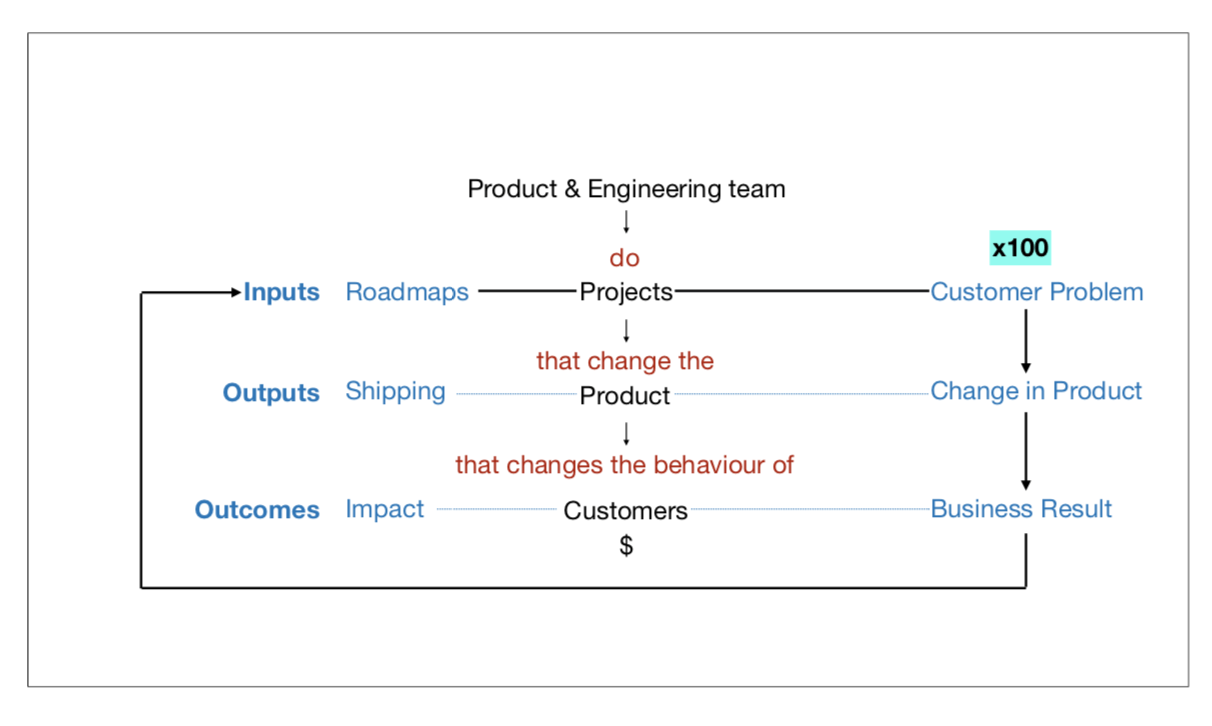

Paul: Yeah, inputs to the product engineering team. I actually had a diagram, which is hard to describe on a podcast, but the first thing going on the diagram was the product engineering team at the top, and then they do projects, which is the next level down. The projects change the product. That’s the whole point of doing a project in a software team. The product should change, and then that change in the product should result in a change in customer behavior. If we added a feature, we expect customers to use it, or we changed how this feature works. We therefore expect customers and users to change how they use the thing. So it’s projects that change the product that change behavior. I didn’t get much into the monetization of that, which is a whole other thing, but you would assume that if you’re running a good business, the changes you make in the product and those consequent changes in user behavior result in revenue.

Des: Either more customers or less churn or more people buying more or whatever, right?

Paul: Exactly. I had existing users broadening or deepening their usage, or new customers signing up, buying something for the first time, et cetera.

Des: So you’re arguing, it sounds like, that inputs, outputs, and outcomes are all very important.

Paul: Yeah, I said that it’s the system that matters, they’re equally important, and we shouldn’t be debating one as more important than the other. We should be saying, “They’re all important, and let’s understand the relationship between them and how to get good at all three.” The inputs determine the projects that you do.

Des: The inputs get digested down into a roadmap. Right?

Paul: Right.

Des: Maybe we’ll just talk about the inputs for a little bit. Jeff Bezos has that famous quote, “The thing you can control most – that makes your business more successful – is actually your inputs.” So what is it you actually follow? What would an example of inputs be for a product team? Obviously, there’s a process by which they spit out a roadmap or a statement of what we actually want to do, but what do we listen to, for example?

Paul: It’s interesting to look at the history of Intercom over the last few years, because we’ve obsessed about inputs. And if outcomes, to us, is a more recent conversation, inputs isn’t. It’s a longstanding obsession. And we now have a pretty advanced subsystem within the broader system of inputs, outputs, and outcomes. We have a little system around inputs. So what we do – and again, this is not for everyone, different companies will have different things – but we typically have five inputs.

- One is our vision, our mission of the company, our vision for the future.

- Two is business strategy.

- Three, then, is around our business goals. Do we have revenue targets this quarter? Do we have engagement targets this quarter? Things like that.

- Four is around prospective customers’ feedback. Our sales team talks to customers all the time, every day of the week. They’re learning a lot of things about Intercom. There are things we can build and change in the product that would help those customers sign up for Intercom.

- Five is existing customer feedback. If prospective customers are talking to the sales team, our existing customers are typically talking to our support team. And we put a little process in both of those orgs, where we have a collaboration with the product team to get all the feedback and aggregate it and come up with a somewhat scientific way of organizing it and prioritizing it.

So they’re all inputting, and then we work out ways to balance them: is this next roadmap more about prospective customers or existing? Is it more about this business goal versus strategy? Do we feel like we’re a little bit behind on our mission and vision, and want to invest more there at this time? So they’re all the inputs.

How to evaluate input quality

Des: And when you think about the quality of an input, what are you looking for? Or if I transplanted you into a different company, how would you go about checking if the inputs are any good? It is a good question, isn’t it?

“The way that I think about inputs is that they’re customer problems, ultimately”

Paul: It’s a hard question. Well, I’ll try to answer it. The first thing I’d do if I was transplanted into a new company is understand their system. But the way I think about inputs – the artifact might be roadmaps – but the way that I think about them is that they’re customer problems, ultimately. One thing I’d look for straightaway is the quality of these inputs. Are they good inputs? And then a second thing is how are they communicated? Are they communicated as a customer problem (i.e., there’s value to be had there for customers and for the company) versus a business problem? I’ll give you an example of where we got that wrong in a second.

On the inputs themselves, I’d look for the process around which some of these things are generated. Here’s a really simple example. After I gave that talk, people came up to me and asked me a bunch of questions. One of the most common questions I got was, “How do you persuade your sales team?” And I was like: “When our sales team are on a call, and they’re chatting to a prospective customer or an existing customer about a new product we’ve launched, they record all that in Salesforce. And then we pull all that data out of Salesforce into our own system and run analysis on it and aggregate it and code it by different dimensions.”

“You have to share back what you’re doing and why their input mattered. Otherwise, if you’re on the sales side, you feel you’re shouting into a vacuum”

And they were like: “Wow. How do you get your sales team to do all the manual work? Our sales team are just closing deals. That’s all they think about. They don’t think about the product.” And I said: “The answer is twofold. One, the sales team we hire are very product-y. We actually hire people who have a passion for product, and it’s one of the values in the sales team to be passionate about our product. So they’re motivated to do it because there’s an interest there. The second reason, which is way more important, is that we ship a lot, which is getting into the outputs actually, but we ship so much. So the sales team can actually see in a very short space of time the direct line between that manual data entry into Salesforce and the thing that their prospective customer is looking for.”

Des: The feedback loop to Sales has to be strong to maintain that. They have to believe, “If I make enough noise about this gap, I will get that gap closed, and I’ll close that deal, and I’ll make more money.” And that’s why I think one of the imperatives, for anyone who’s trying to adopt some sort of feedback loop with Sales, is that you have to share back what you’re doing and why their input mattered. Otherwise, if you’re on the sales side, you feel you’re shouting into a vacuum.

Paul: Yeah, exactly. And to try and answer your original question, they’ll just put rubbish into Salesforce or not bother.

Des: But the other piece I find is that I don’t like inputs that are super dynamic, if you know what I mean. They get changed quarter to quarter or week to week. It can’t be like, “The number-one feature we need is a Zuora integration.” Next month it’s a Microsoft Dynamics integration. Next month it’s tagging. You’re like, “Well, hang on.” Generally speaking, customer needs tend to not change that often. So I feel like there’s a massive frequency bias whenever I see these things change.

It’s good to look at the rate of change within the top 10 or whatever; that’s one thing that just helps you sanity-check the input. Also the recidivism. If we get something off the list, and it comes back again, that means we’re not properly understanding the feature. So like: “You asked for permissions. Why is permissions back again for the third quarter, even though we’ve said all three times we got it done?” Well, it’s clear that we’re doing an MVP on this or something like that, and we’re not getting away with it, you know?

Paul: Yeah. We scoped too small.

How to think about outputs

Des: Yeah, totally. All right, so that’s inputs. Outputs. How do you think about outputs? Is it like how many features are launched to the public?

Paul: Yeah, I think at the highest level it’s shipped product changes. So going back to the product engineering team that does these projects, they’re looking at customer problems based on these great inputs, you’d hope. And then that changes the product. And sometimes these things are in the back end, obviously. It’s security improvements or whatever, it’s not always visible, but they’re changes to the product. And so it is things you ship. And now of course any of these dimensions, they can be good or bad. You can have bad inputs or good inputs; you can have bad output and good output.

Des: Yeah, and that’s just a function of the team, right? Or of scoping or priority or design or whatever, but if you have a bad output, you don’t get to blame: “We were asked to do the wrong thing and we did the wrong thing. Someone asked us for bullshit, so we did some bullshit.” Or it can be like, “Our team really phoned it in, and we produced half of the thing we said we’d do,” or whatever, right?

Paul: Exactly. All three of these are levers. And outputs to me is actually very simple. If one of these three is more important than the other, I think I’d argue that it’s inputs, because I had a slide in the conference that basically said, “Shit in = shit out,” which means it starts and ends with the inputs in a way. With outputs, I think it’s pretty simple, which is the obsession with shipping that we’ve had over the past few years is a good thing. And like we said earlier, we do obsess about it, and we continue to obsess about it. One of the conversations we come back to a lot at Intercom is, as we grow and scale and get more product teams and more engineers and our code base gets more complicated or bigger, can we still ship as fast as we used to? And that’s testament, I think, to our obsession with shipping. Having said that, within that you then have variables below that like scoping. Do we scope the problems to just the right amounts? Do we solve the problem without doing extra work? Speed: how fast are we? Have we compromised the quality? But at the end of the day, I think it’s pretty simple: ship product changes.

Feature factories: good or bad?

Des: Yeah, totally. And so inputs and outputs are pretty clean. With outcomes, one thing that occurs to me is that I often grimace when I see any team celebrate an outcome but they can’t actually articulate why it happened, if you know what I mean. Here’s an example. A large customer could sign up for Intercom tomorrow – a very large customer, say a seven-figure deal. And they could buy one of our products, let’s say a product that might’ve had a million-dollar goal for the quarter. And with those results, our product team could blow through their target. The outcome from that team would look phenomenal for our quarter, but ultimately they had nothing to do with that.

“You’re at your best when you’re celebrating repeatable victories”

Obviously they built the product, but in this period of analysis, the business outcome arrived independent of what we were doing in that exact quarter. And the reason I care about those things is because I think you’re at your best when you’re celebrating repeatable victories. You know what I mean? Things where you’re like: “I know exactly why we laid this out. The strategy worked. We got exactly what we planned to get, and that’s awesome.” But there are loads of things that produce outcomes, right? And we can have a really successful marketing campaign and see signups across the board. It doesn’t mean everyone’s product got better. So, talk to me about how you chase the path from an output to the outcome without getting blinded by other rising tides that might’ve helped us along the way.

Paul: I think this is really important, teasing apart cause and effect, what caused what. Before we get into it actually, while you were speaking there, it jogged my memory on something you said to me last week about feature factories, because you were asking how we have a repeatable, consistent, high-quality output. And I remember you saying to me that factories are a brilliant way to have consistent high quality. And the feature factory thing was given a very hard time.

Des: So, I hate this phrase. This is one of these things where, if there weren’t the alliteration in this, no one would be talking about it. But now it’s, “Oh, let’s look at feature factory.” I know. I’ve heard it from even PMs in Intercom, “Oh, this is going to turn into a bit of a feature factory.” And I don’t know why that’s necessarily a bad thing. You realize money is made in a mint where you have a repeatable process for making money, right? Same with cars. Same with iPhones. We’re all carrying around iPhone X Pros or whatever. They came out of a factory that needed to produce a lot of them.

I think the implication of feature factory is we’re spitting out random shit left, right and center, but there’s nothing really cohesive to hold it all together. Or it might be like biting your lip on the idea that, “We made five decisions at once, and we have to get all five live, and now we just need to go into factory mode,” versus shipping one, seeing what happens, shipping a second and seeing what happens and charting your course that way. But I think factories are awesome things to be able to create. A lot of incumbents in the world would wish to god they had a factory, when actually what they have is an artisanal mill for producing one thing every now and then. But to be able to produce lots of stuff at some degree of scale, whether it’s improvements or closing bugs, or speed enhancements, or new functionality, is powerful.

I think it sounds negative, but if you believed that the features you’re shipping produce value, as your system outlined earlier, then you could rename a feature factory as a value factory. And all of a sudden, I’m buying 10 of them. Maybe it’s a feature factory when you’re doing random shit, and you don’t know why you’re doing it, there’s nothing holistic that groups it all together. There’s no strategy that binds it all.

And in true factory sense, it goes out the door, the mechanical door shuts behind it, and you’ve no clue what happened afterwards. And your job is just to get that stuff out the door, and later on someone will come down from on high and tell you your sales are up or whatever. If you don’t have the proper feedback loops, then maybe that’s a shit experience. But I think factory isn’t the bad word here, in my opinion. It’s depending on how you believe it. I do think any good software company needs to be able to get a lot of work done in a short space of time. That’s how you do it.

Paul: Yeah, absolutely. It reminds me of a couple of things. One is that, when I was doing this talk, one of the things I kept emphasizing for people was that the rapid pace of technology means that it doesn’t matter where you are. If you’re in a blue-ocean environment, you won’t be for long, because people see your success and copy you and try and emulate you. Or you’re already in a red-ocean situation, where the competition is fierce. And in any of those situations, the competition is so fierce that you need to obsess about this system, and you need to try and build a factory. The other thing that comes to mind is the word “machine,” which is another word that’s like factory. There’s something dehumanizing about it. I don’t want to be a machine in a factory, which is just a whole other conversation.

“Outputs are the changes in the product, and the outcomes are the business results”

I’d hope any company who is scaling is trying to say, “We’re trying to build a machine.” And it may be starting to answer your other question. If you’re a well-run business, you want to build a sales machine, you want to build a marketing machine, a product shipping machine. And these are really good things. And to try to actually answer your question about outputs and outcomes, the outputs are the changes in the product, and the outcomes are the business results.

To go back fully, you have the customer problem (which is the input), changes in the product (which is the output), and then the business results being the outcome. You need all three. When you start changing the product, you should have an intended business outcome.

Des: That’s how you know you can take credit for it if it happens, basically.

Paul: Yeah, and that should be a machine. This should be a repeatable factory. It should be a repeatable motion. You can put things into the system and get very predictable results.

Reframe business problems as customer problems

Des: Let’s just run through a couple of examples here. We have seen the damage of having an outcome-only approach to things, and for our listeners, this might be that you were told that we need to improve onboarding or activation for your project management tool. Or: “Hey, no one’s using our files feature. Get everyone using our files feature.” The way it might be stated is that our files usage is at one percent, and we need to get it to 10 percent. And you take that as the goal of the project. We’ve seen that go askew or be really problematic to work with. Talk us through some examples here.

Paul: I’ve got one great example from a few months ago here. Remember, we obsessed with the inputs, not obsessed with outputs. And historically, we’ve made many mistakes and had our ups and downs but have been relatively very successful at shipping good product that the customers value and buy. But I remember a few years ago, I was on a panel at a conference, and someone from another really respectable, good tech company was saying that their projects are metrics. All their projects are to drive up metric X or drive down metric Y. And at the time I was like, “Oh my God, those sound like terrible projects.” And then with this outcomes-over-output thing, I had a mini identity crisis. I’m like, “Oh, maybe I don’t get it. Maybe we need those types of projects too.” And maybe, like many things in life, the older you get, the more you realize how little you know. But I’m not sure if I’d say I’m full-circle back to the idea that a metrics project is bad, but when we’ve tried them, we’ve struggled.

Here’s one example. We charge for Intercom. One way we charge for Intercom is by inbox seats. In other words, if you’re a customer support team, and you use our inbox, each support agent buys a seat. So if you want to add a new customer support person, you need an extra seat.

Des: It’s surprising it’s so simple, isn’t it?

Paul: Oh, here, you’re going to need a podcast for that one: “Intercom on Pricing.”

Des: Yeah.

Paul: So it’s pretty simple. Obviously, more seats equals more revenue for Intercom. And we had a loose theory that “we don’t think all the people who should be using seats are using seats, and actually they should add lots of their colleagues, and they’d see value in Intercom too.” Kind of a loosey-goosey theory. And so we went off on this endeavor, and the project was called “Increase inbox seats.”

So the input was business-focused, not customer-focused: “We could increase our revenue in this corner by increasing inbox seats.” And the project went around in circles. We never got past the project brief stage, because the PM team, to their credit, asked, “What’s the customer problem we’re solving?” Like, “I can’t design this thing until you tell me, or until we realize what customer problem we’re solving, what value that customer will get.”

Des: Right, so if I play that back to you, if they didn’t ask that question, the obvious thing I’d say most people’s mind would go to would be a load of modals saying, “Please add some more seats.” Like this reductive sort of: “We just need to tell people to add seats. Done. Next problem.”

Paul: Yep. Or another one is, “Redesign the paywall.”

Des: A/B test the paywall. And maybe a small degree sharper might be to look at the difficulty of adding some bodies to the inbox and maybe making it easier to add or something like that, right? Or maybe at various inferences. But where it sounds like they ended up was, “How do we make it more valuable for more people to have an inbox seat?” That’s actually the question, because if it is valuable, people will do it. If we make the product more valuable to use, engagement in the product should increase.

Paul: Right.

Des: And if you only think in the world of triggering a lot of popups and paywalls, you’re hacking the engagement function independent of the value function. Sometimes that’s not a bad idea. Sometimes it’s like, “Hey, people just didn’t know that we had this feature, so we need to tell them.” In that world, engagement is your problem, not the product. But I think what you’re saying is that oftentimes when you’re trying to look for something as deep-rooted as an actual revenue-boosting feature, you need to actually first look at value. If you increase value and your monetization function isn’t broken, you’ll increase revenue, and so that’s how you play it back.

“If your project is framed as a business problem, can you easily translate that into a customer problem?”

But there’s a bigger piece there you said, which is that your roadmap should be full of customer problems, not business problems, and every business problem – unless it’s something like fixing this bug that’s like stopping us from charging or whatever – should be frameable as a customer problem. So it’s not make people add more seats; it’s demonstrate the value, or increase the value of having more of your team collaborate in the inbox. And if you do that, then maybe you start seeing the right types of features or the right types of improvements come out.

Paul: Right, exactly. And that, just to repeat back what you said, that’s the test. It’s an exercise I think anyone listening can try. Look at your project, look at how it’s framed, and if it is framed as a business problem, can you easily translate that into a customer problem?

Des: And it should be like relatively one-to-one. It shouldn’t require a great degree of imagination or logical gymnastics to say, “I don’t know, we think if this happens, then that happens, blah blah.”

The swinging pendulum

Des: Okay. We’re coming up on time. I want to ask you one more thing. Within the PM community over the past seven years or so, we’ve seen all these pendulum swings where once there were personas, there are now Jobs-to-be-Done. It seems like we’ve also moved from like ship, ship, ship to outcome, outcome, outcome.

And there are probably four or five others that I’ve forgotten about, whether it’s sprints and squads or Agile or Kanban, or you name it. All sorts of stuff like that. What, in your opinion, should the PM community be thinking about and talking about to avoid these sort of frequent undulations of: “It’s X. No, it’s Y. Actually the truth is somewhere in between. It turns out both are important.”

Paul: Yeah, there are a couple of things. One is, back to what I said earlier, the less religious you are about things, the more open minded you can be to question something like: “Why do people have metrics projects? Let me understand that first.” All these things matter to some degree: the inputs and the outputs and the outcomes. And it’s a system. I don’t think enough people in general building software (but PMs in particular) think about systems. Engineers might think about it a bit more because –

Des: It’s inherent in their domains.

Paul: They’re building the things, right. But even then, they may not think about the system as a business system. It’s a very people-oriented system, if you think about it. All the inputs are people talking to people. So the first thing and the most important thing by far is to think about this system as a whole. My Spidey senses go off anytime I see someone advocating for one part of the system being more important than the other parts. That’s where I think you see these pendulum swings, where people are like, “Oh, it’s not about the outputs. It’s all about the outcomes.” And lo and behold, two years later it’s like, “Oh, we all forgot to ship.” So it’s the system, and all three: inputs, outputs, outcomes.

“Ultimately, all three variables are independent. You can have amazing outputs and amazing intended outcomes. But if the inputs are bad… it doesn’t matter.”

And the second thing that is still quite surprising to me, honestly, is there’s very little talk about inputs, relatively speaking. If you look at all the energy that’s put into outputs – think of the million ways you can do scrum, the bajillion conferences on Agile. You’re inundated. Now it’s all about outcomes. And actually, I’m still waiting for the books about inputs, the energy about inputs.

Des: How to actually get the right sources of information to make the decisions that are best for your company.

Paul: Yeah. And it’s back to the shit in, shit out thing. Apologies, but that’s what it’s about. Ultimately, all three variables are independent. You can have amazing outputs and amazing intended outcomes. But if the inputs are bad –

Des: It doesn’t matter.

Paul: You’ve got the wrong kind of outcomes. The niche part of your business, or the niche part of your product, is amazing. And all the core is terrible.

Des: So for our listeners who probably are working in product orgs, can they draw a system diagram of what comes into them? What’s your decision-making logic or calculus? And then what’s the actual? How do the outputs happen? And then how do we chase the output through to the outcome? So that we can actually know who and how we listen to things. The degree to which you can do that is the degree to which you’re in control of your organization and thus probably your company, in a sense.

Paul: One thing we didn’t fully mention is that there’s an arrow coming out of business results going back into inputs, which is more or less what you’re saying.

Des: Yeah. It’s a self-effecting system, for sure.

Paul: And that’s really important, too. One other thing that comes to mind is an anecdote for people listening that might be helpful. Last Monday night I gave this talk, and the same questions came up, which was: what’s my role, and what’s your role? Des and the co-founders, what do they do? Paul, you run the product, and what do you do? I always explain to people, and they’re amazed by this, that our job is designing the system. And here’s what’s not an input: Des’s idea, Paul’s idea. That is not how we run the company at all. And I know that some people do struggle with those dynamics. So back to the idea that it’s the system that matters, and if you’re going to rectify one system at the start, get to the inputs and fix them.

Des: Absolutely. What I look at is the inputs, and I think: “Why am I having this idea, and no one else is? Maybe our system is broken, or maybe actually it’s being traded off against something that’s way more important that I don’t know about.” If you share all the context, you believe everyone’s seeing and looking at the same data, and they come to a totally different conclusion, it’s probably that I’m more out of touch. (I’m not that out of touch, I swear.)

Paul: No, no. Des is not out of touch.

Des: On that somber note, we will end Intercom on Product, Episode Six. Paul, thank you so much for your time.

Paul: Likewise, Des. Been a pleasure, always.