Ship outcomes, not just features, with the Product Impact Framework

Main illustration: Nadine Redlich

Our industry is in the midst of a big philosophical debate about how we build our products, with the focus shifting from the outputs we build to the business outcomes those outputs generate.

We’ve been thinking deeply about how to make this change in our own organization, with Des and Paul leading our discussions about it. More broadly, industry thinkers such as John Cutler and Josh Seiden are writing extensively about how organizations can effect such change in their mindset.

In some ways, this discussion is more relevant to product teams, and product-led companies, than to growth teams, who are by definition focused on outcomes. But the fact that it’s a discussion at all highlights how little we actually know about the relationship between outputs and outcomes, and how ambiguous the causal relationship between product and profit, so to speak, can be.

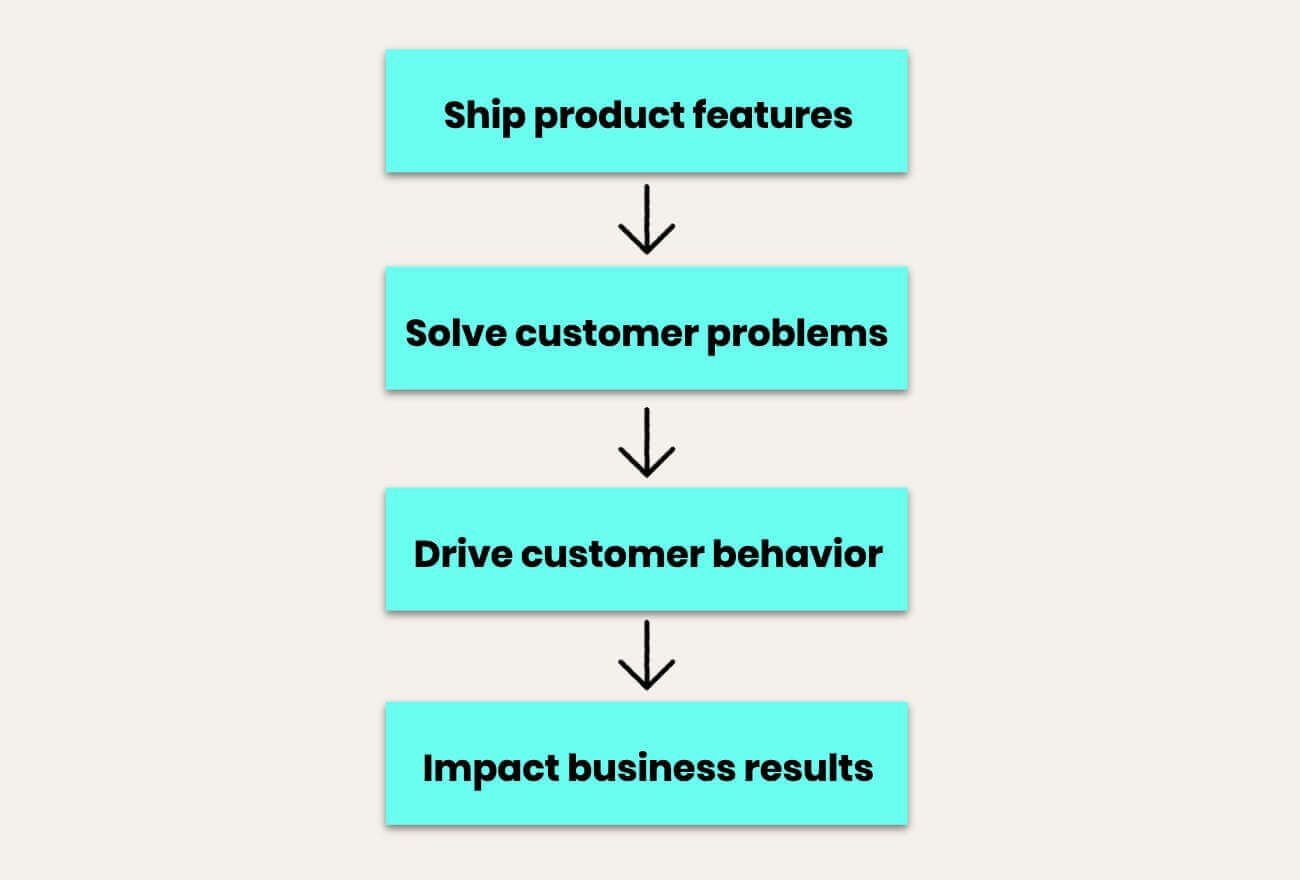

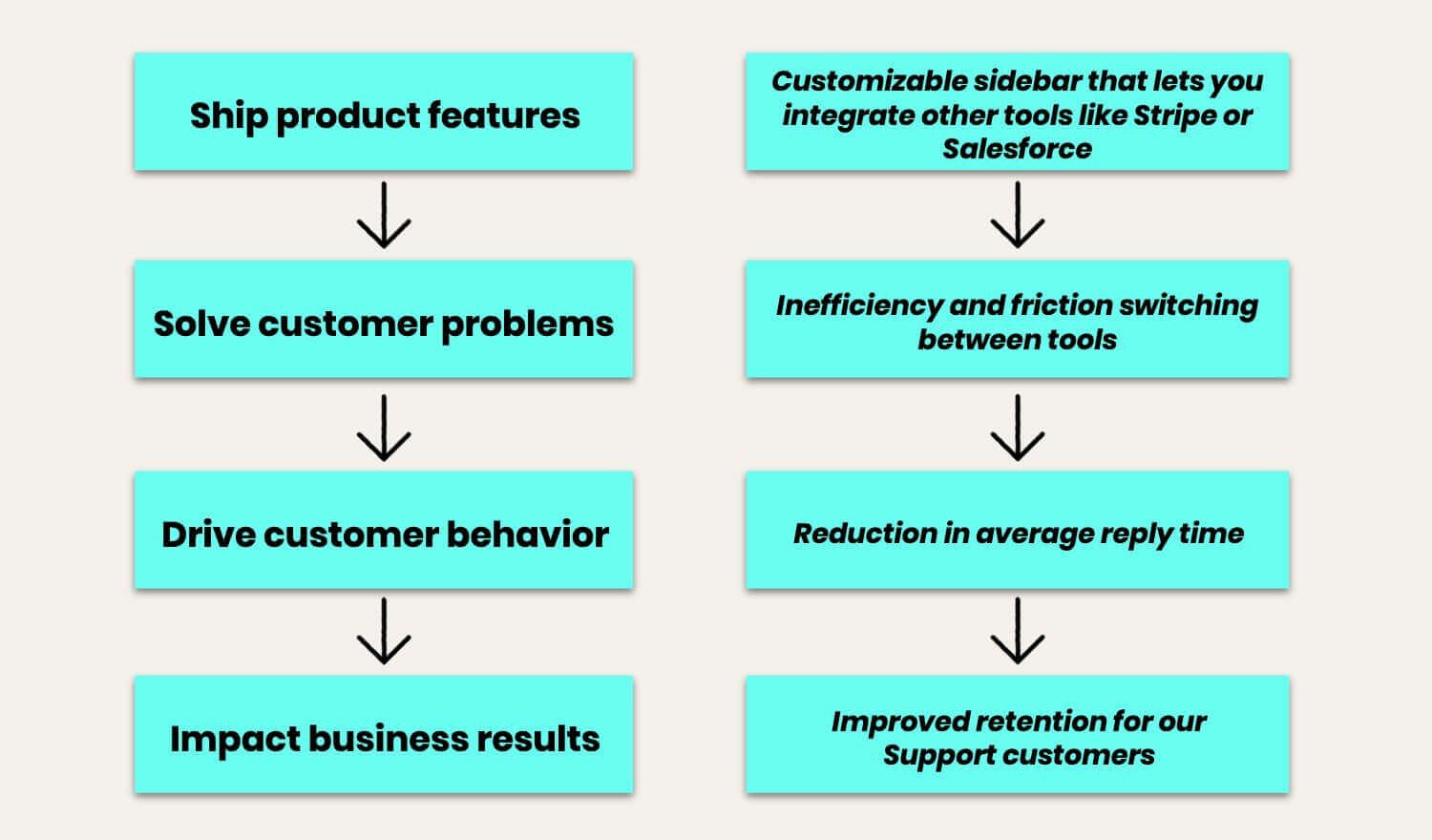

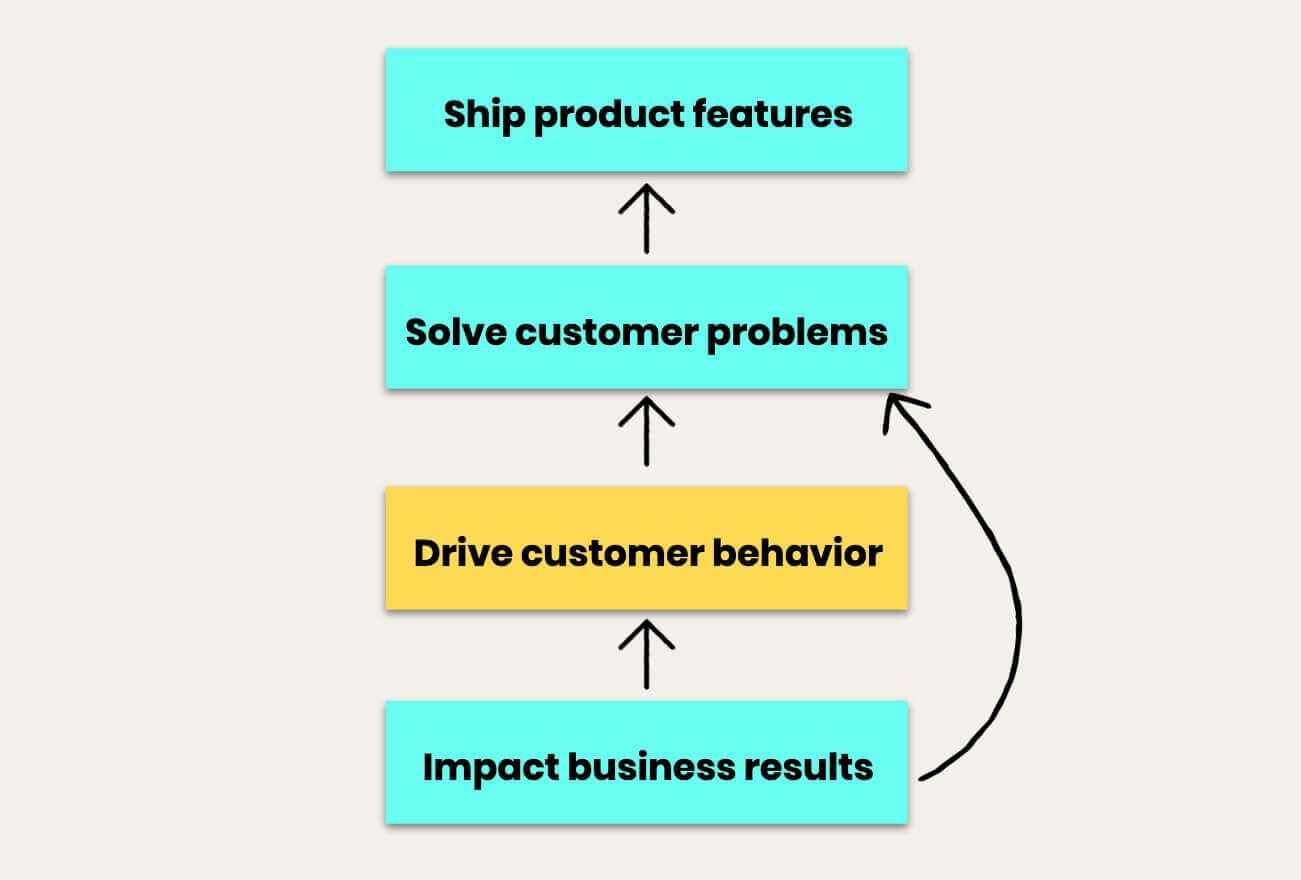

To fully understand what such a change in thinking requires, we’ve realized that it’s important to understand what we call the “Product Impact Framework” – the steps of cause and effect that culminate in positive outcomes for the business.

Essentially, any project you work on results in the shipping of a change to the product (usually but not necessarily a feature). This feature, if it solves a customer problem, results in measurable changes in how customers behave in your product, and ultimately this behavior change delivers an impact to your business.

But knowing how these steps work is not the same as making it happen, and we think we know why.

Context: where we’ve come from in trying to move to outcomes

First some context. We’ve anchored a major part of our R&D culture around the speed of shipping. Back in 2013, our head of engineering Darragh coined this mantra: Shipping is our heartbeat.

Later that same year, our head of product Paul wrote a blog post about shipping just being the start of a process, which we eventually refined into a core R&D principle of “Ship to Learn.”

When you’re in the startup phase of a company, speed of shipping, and shipping uncomfortably early so you can learn what actually works, is essential for success.

It also allowed us to begin with features, and then quickly see which ones had an impact and which ones didn’t. That speed of iteration came naturally to us, and was very fruitful. The Product Impact Framework felt like a natural progression from features to outcomes.

But we didn’t necessarily have full control of those outcomes, nor were we entirely able to predict them. As we scaled, that had to change.

Getting our teams to think more commercially

In 2018, our co-founder Des adopted a new internal mantra: “It’s all about market impact.”

We needed our R&D teams thinking much more commercially. It wasn’t enough to efficiently solve customer problems; we needed to be thinking about how we were helping our business to be more successful.

The core problem here is that the gap from shipping an improvement to measuring its impact on your key business metrics is, for most product teams, as wide as an ocean. The relationships between steps in the Product Impact Framework might seem clear in theory, but they can be hard to decipher in practice.

The Product Impact Framework at work

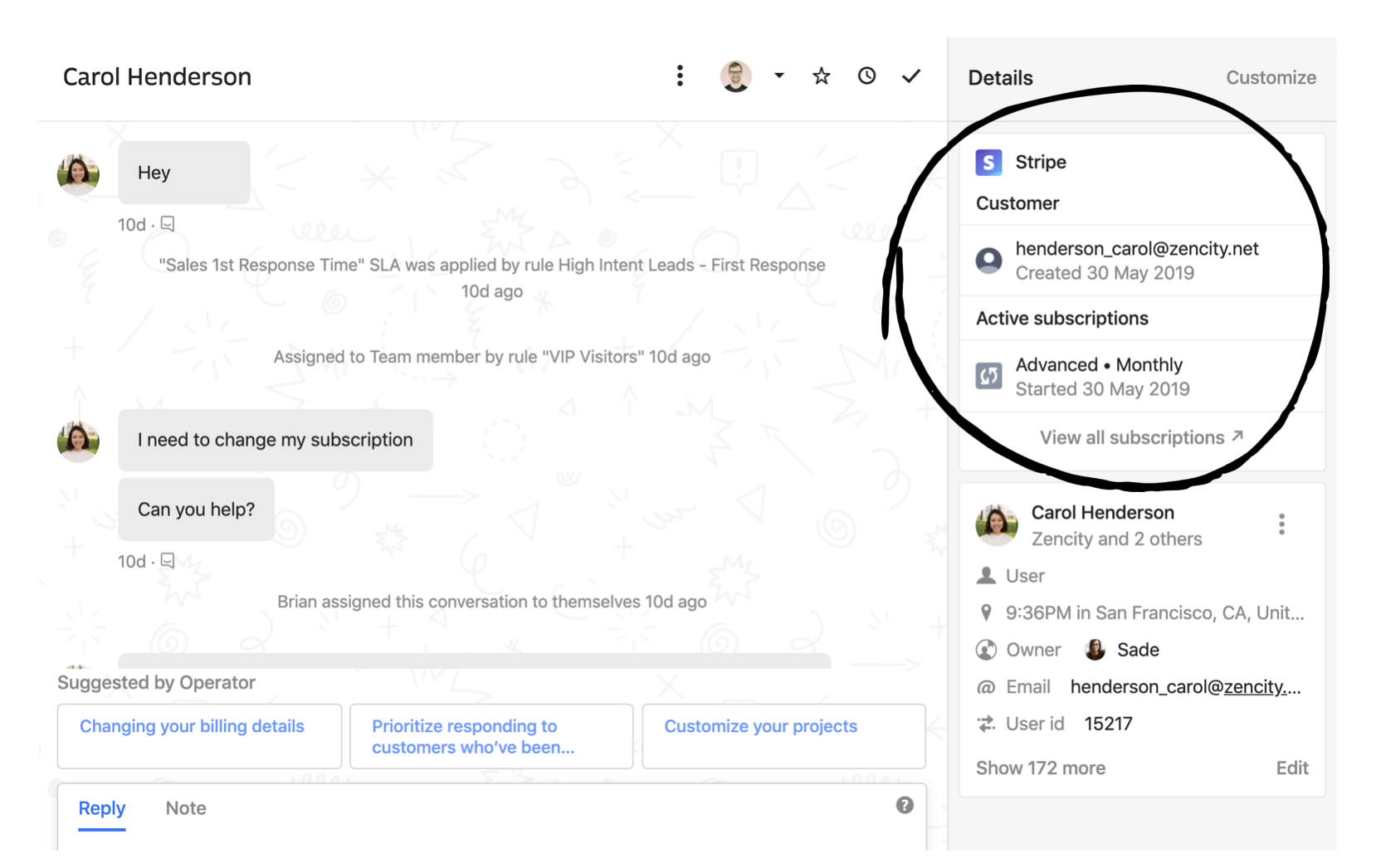

This conundrum is clear if we examine a recent example, and how it maps to the Product Impact Framework. Earlier this summer we released Inbox apps, which allows Intercom customers to customize their Intercom Inbox by arranging their tools and the information they see about their customers.

Here’s how this feature fits into the framework:

Simple enough, right? Perhaps so, but the relationship between those final two steps, from reduction in average reply time to higher support retention, is not trivial to demonstrate. How confidently can we predict the increase in retention, for instance?

As we move to a world that is focused on outcomes, it can be very tempting to look at the framework and reverse it – that is, instead of beginning with features, as we traditionally did in our fast-shipping, fast-iterating world, we simply begin with the outcomes we want to see, and work backwards.

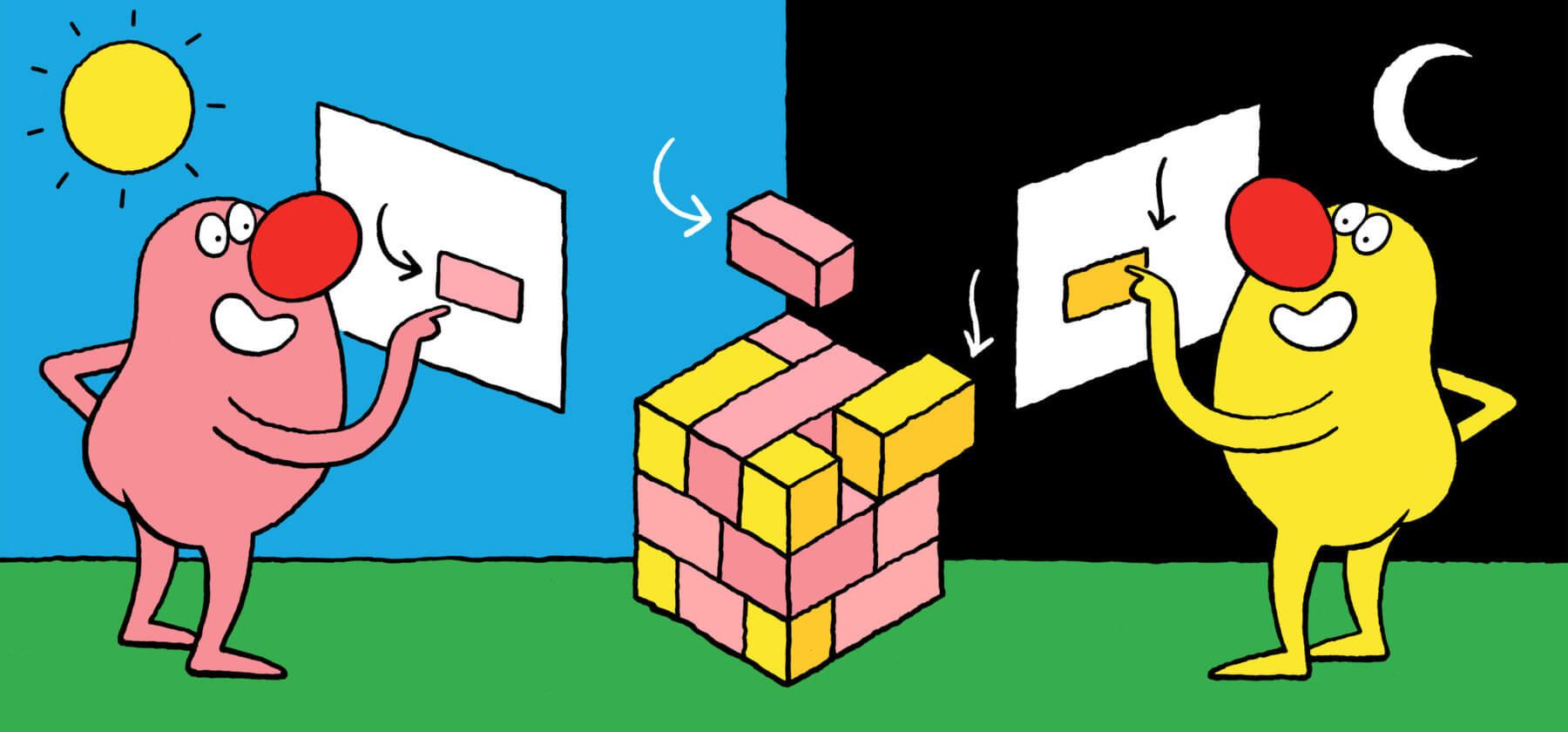

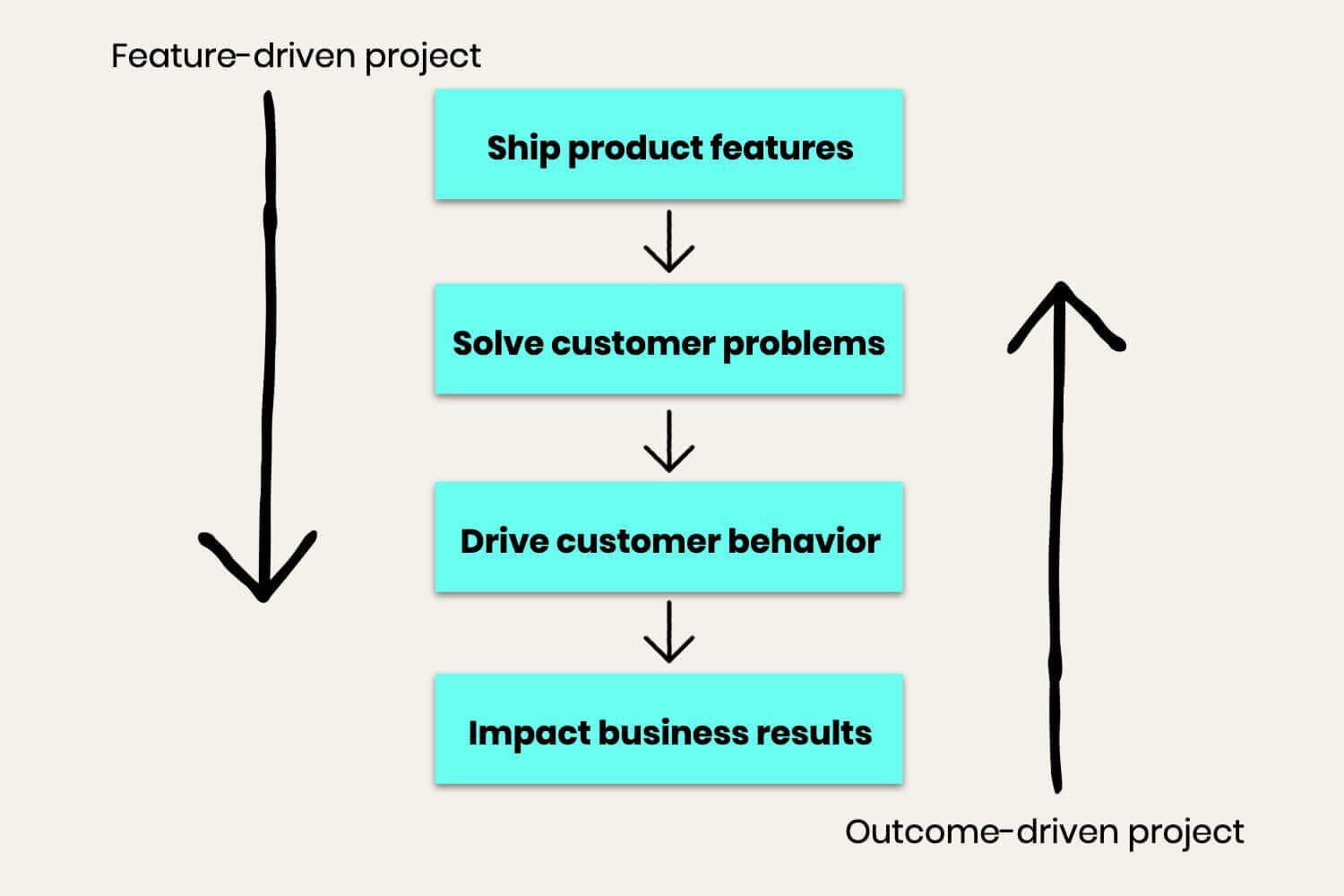

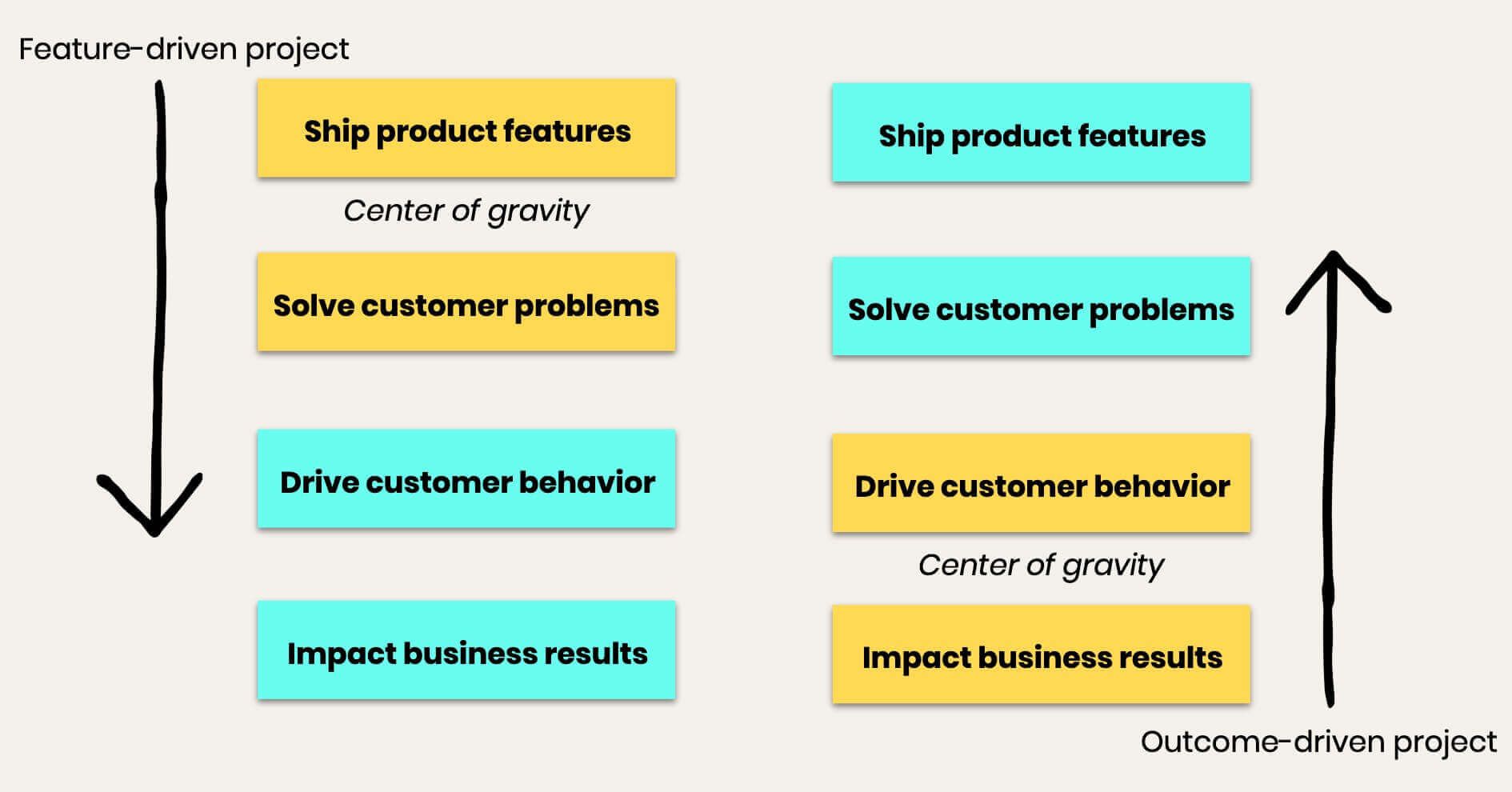

The beauty in this framework is that it actually supports two fundamentally different approaches to building product: feature-driven projects and outcome-driven projects. But only after much effort and experimentation did we realize how fundamentally different the two approaches are – as we discovered, one is not simply the reverse of the other.

Feature-driven projects

Feature-driven projects are the most common approach to building product. The features could come from feature requests from your existing customers, or from product gaps that your sales team are encountering as they try to close deals. Or the feature could be a new idea or big bet your team is really excited about.

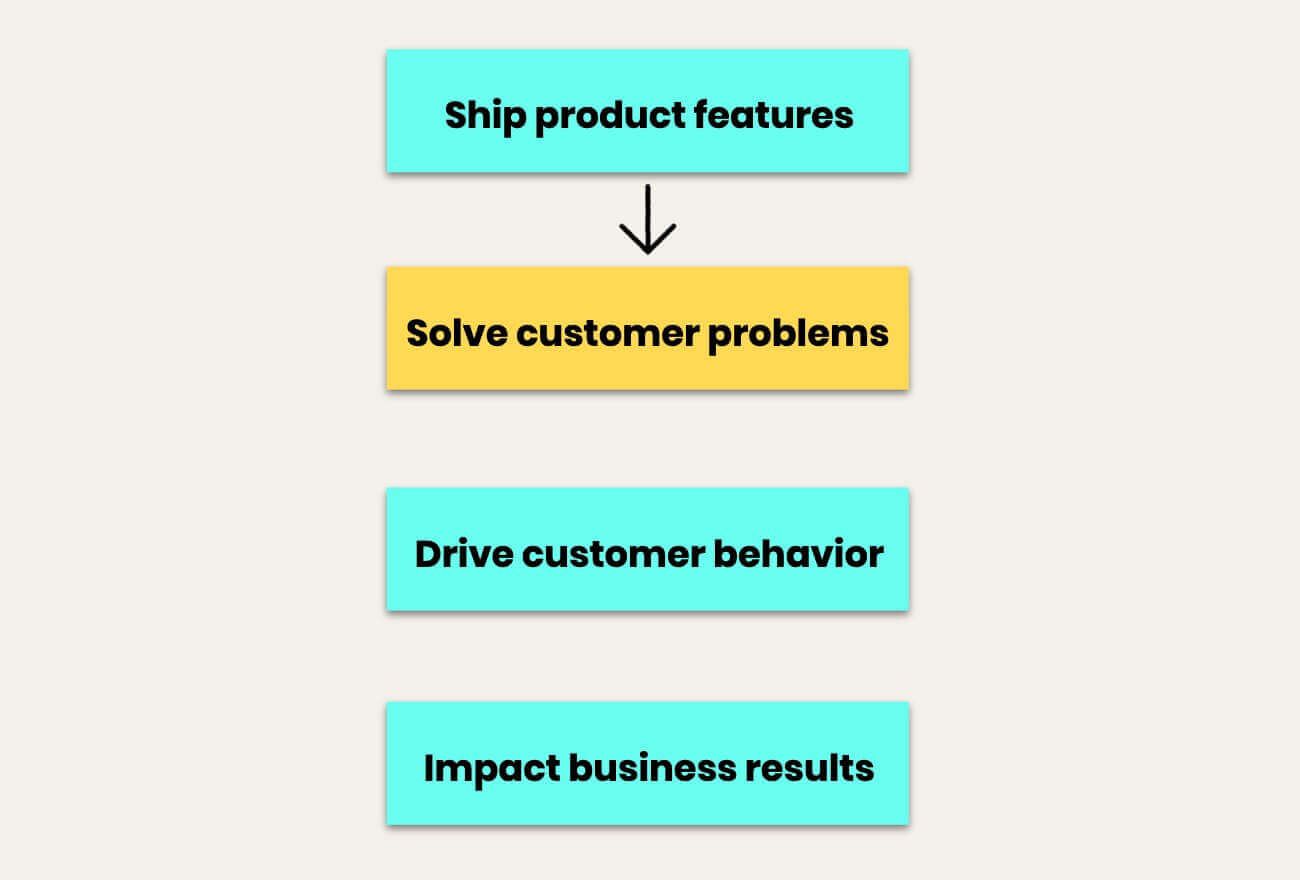

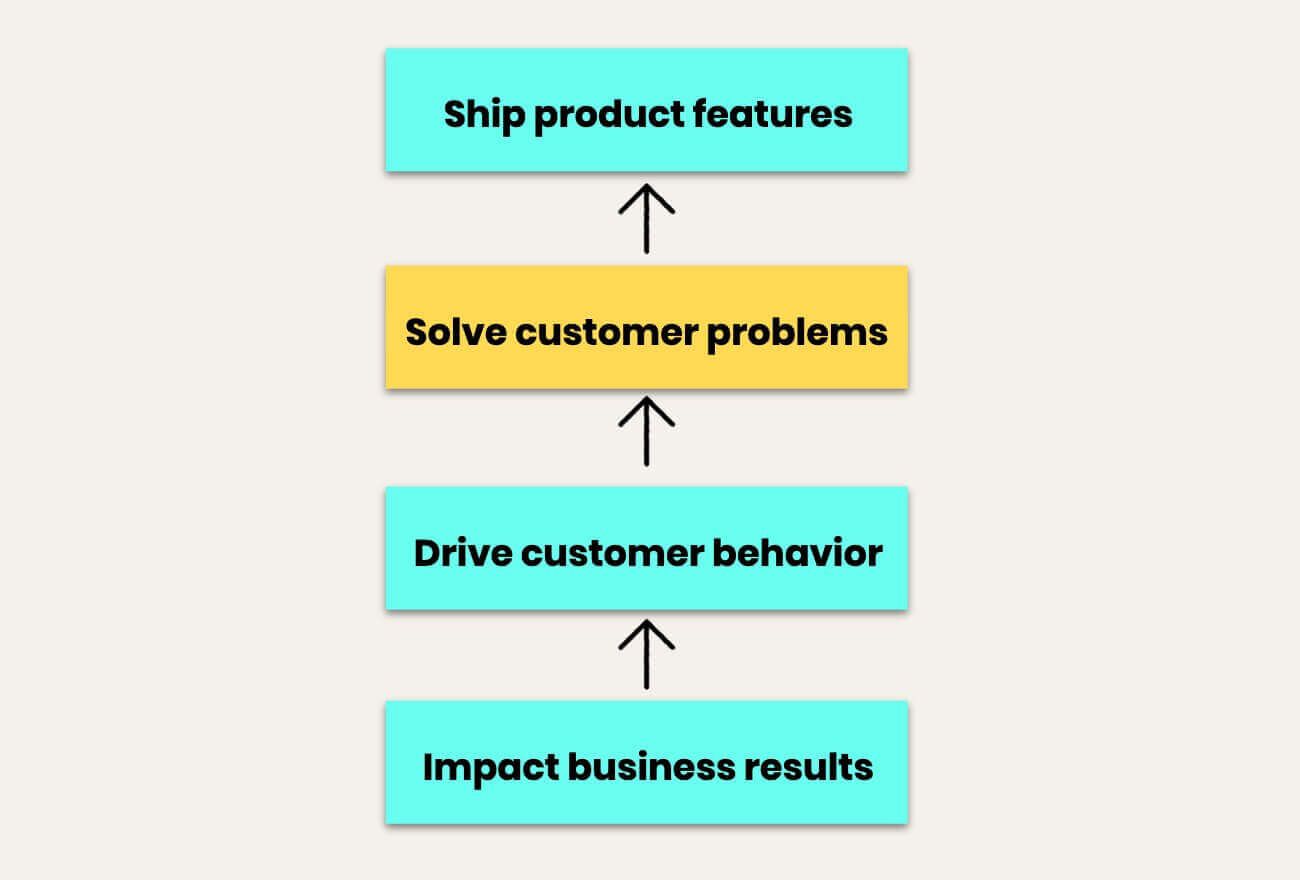

Whatever the source of the feature, the key point is one we’ve banged on about numerous times in the past – it’s absolutely critical to understand the actual customer problem to be solved. That’s the first step working your way down the framework:

Paul has written about how important it is to put far more time into problem definition, and it’s our first R&D principle:

Getting a team to rally behind a problem is incredibly empowering, because it unblocks much more creative approaches to a solution, and makes it much more likely those solutions will actually work.

For us, the real challenge comes trying to move further down the framework, towards the outcome end of it.

This is usually the case for feature-driven projects: it’s easy to see if you’re solving a problem through the directly related customer behavior, but very hard or even impossible to tie this to higher-level customer behaviors and business results. To cite the Inbox apps example again, we could see the effect of the new feature by looking at usage and adoption rates over time, but identifying the impact of this specific change on response times and thus retention was much harder.

Higher-level customer behavior metrics, such as average response time, and business results, such as retention, have many variables affecting them. As a result, it’s much more difficult to prove that a change in one of those variables resulted in changes to the business metric without a very lengthy A/B test. In addition, business metrics tend to be lagging indicators, which means that the feedback loop from testing to learning is often too slow to be of practical use to a team.

To get around this issue, you need to use what former Netflix head of product Gibson Biddle calls proxy metrics, which are stand-in measures for your actual business metrics. By using measurable customer behaviors as a proxy for business results, you create a much faster feedback loop for your R&D teams.

But still, the team really has no idea or even reasonable estimate of how Inbox apps impacted our business results. This project, like many feature-driven projects, is stuck further up the framework stack.

The lesson is that it’s really hard to get a feature-driven project to extend all the way down the stack. Identifying proxy metrics helps to accelerate progress.

Outcome-driven projects

The alternative approach is to start with a desired outcome. Indeed, this is a common situation for teams that are focused on growth, but as a traditionally product-led company, this approach wasn’t the most natural fit for us.

For instance, last year we asked a team to “improve Inbox seat expansion.” Seats are one of the primary ways we charge our customers, so really this was the same thing as saying “expand our Inbox revenue for existing customers”.

It sounds obvious, but no team had ever looked at this before, and since a large percentage of our revenue came from this source, it seemed like a big opportunity. And it was totally wide open – there were zero beliefs about how to do this, it was completely up to the team. So it seemed like an ideal project to learn how to become outcome-focused.

But it was a flop. The project went nowhere – it didn’t even get off the ground. The starting point was so alien that the team basically had no idea how to even think about the project. 🤷‍♀️

And it took us a while to understand why. The problem was we had unintentionally tried to skip a step in the framework:

We hadn’t done the hard work to figure out what customer behavior actually mapped to Inbox seat expansion, or identified problems that were preventing customers taking these actions. And this project was for a team that was used to being grounded in customer problems, so this starting point basically put them in limbo.

That measurable customer behavior is exactly what a proxy metric is: a stand-in measure for your actual business metric.

Without clearly understanding what’s preventing users from taking the actions you want them to take, a team ends up hypothesizing about what the problem could be and designing features to solve this hypothesized problem. This is a common process for teams new to working on outcome-based projects, and the risk is that without first validating the hypothesized problem, you’re essentially gambling with your product development.

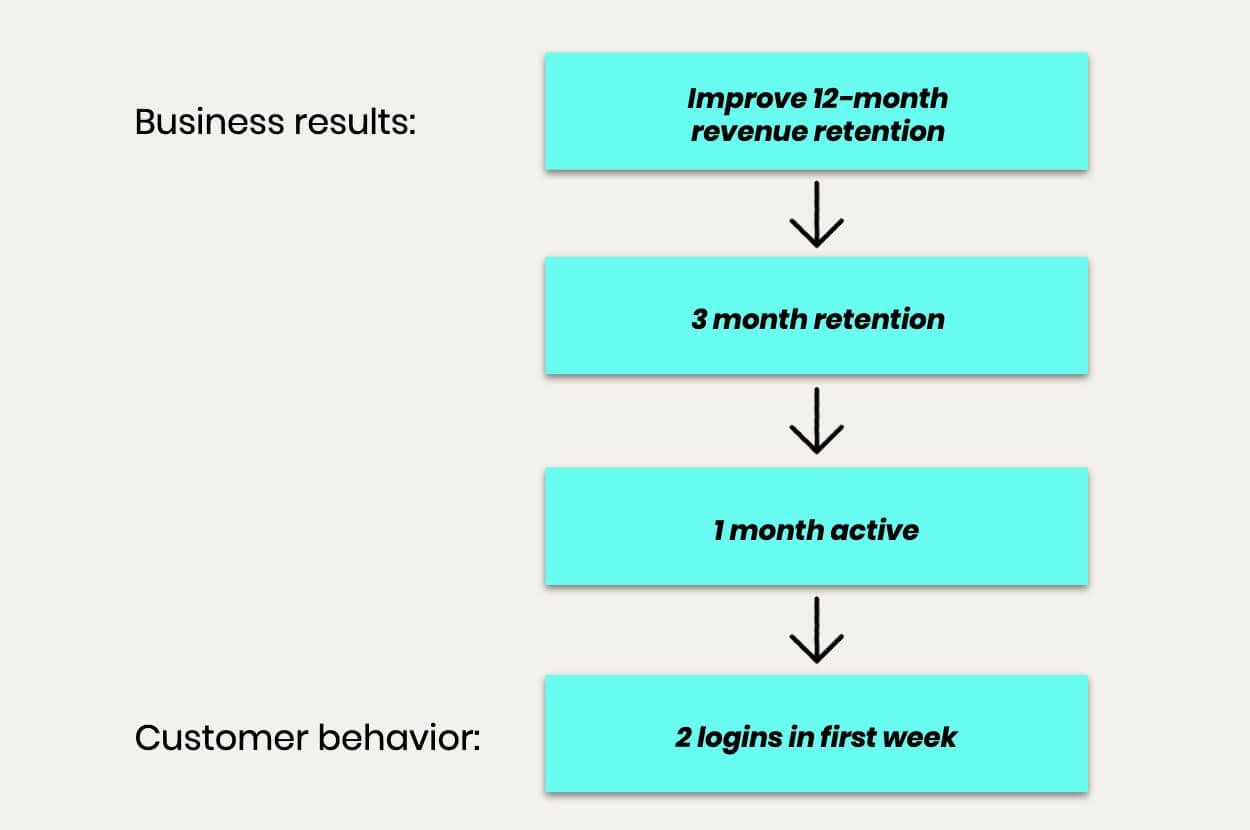

Here’s a real example of this that our Onboarding team recently worked through. They were focused on improving a key business metric: 12-month net revenue retention. They had to do some heavy analysis to keep mapping this up to a measurable customer behavior, and the result was kind of like a daisy-chain of links:

Now the key here is you need strong evidence that the designated customer behavior actually maps to business results. I mean, just imagine asking a team to get customers to log in twice in their first week. Who’s going to believe that this is the secret key to long-term retention? But once you’ve established a strong link with proper analytics, then your team can focus solely on that metric without worrying about whether they’re wasting their time.
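As a rough illustration of what a first check on that link might look like, here is a minimal Python sketch that compares 12-month retention for customers who hit the candidate behavior against those who didn’t. The `Customer` fields, the login threshold parameter, and the toy data are all hypothetical, made up for this example rather than taken from our analysis. A gap like this only shows correlation; on its own it says nothing about causation.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    # Both fields are hypothetical names chosen for this sketch.
    logins_first_week: int
    retained_12_months: bool


def retention_rate(customers):
    """Share of the given customers who were retained at 12 months."""
    if not customers:
        return 0.0
    return sum(c.retained_12_months for c in customers) / len(customers)


def retention_by_proxy(customers, min_logins=2):
    """Split customers on the candidate proxy and return both retention rates."""
    hit = [c for c in customers if c.logins_first_week >= min_logins]
    miss = [c for c in customers if c.logins_first_week < min_logins]
    return retention_rate(hit), retention_rate(miss)


# Toy data, invented purely for illustration.
customers = [
    Customer(3, True), Customer(2, True), Customer(2, False), Customer(5, True),
    Customer(0, False), Customer(1, False), Customer(1, True), Customer(0, False),
]

hit_rate, miss_rate = retention_by_proxy(customers)
print(f"Retention with 2+ logins in week one: {hit_rate:.0%}")
print(f"Retention with fewer logins:          {miss_rate:.0%}")
```

In practice you would want far more data than this, and some way of ruling out confounders such as plan size or company type, before asking a team to anchor on the metric.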

So here’s one hidden secret about moving to outcomes: finding your proxy metrics can be really hard. As Gibson says: “Isolating the right proxy metric sometimes took six months. It took time to capture the data, to discover if we could move the metric, and to see if there was causation between the proxy and retention. Given a trade-off of speed, and finding the right metric, we focused on the latter. It’s costly to have a team focused on the wrong metric.”

Even worse, that metric is often useful for only one team. So you have to repeat that process again for different areas of your product.

Okay, so now we have our proxy metric – getting brand new customers to log in twice in their first week. We’re still not ready to dive into design and build. We have to resist the temptation to skip a step:

You need to do research to understand what problems prevent customers from doing what you hope they do. For outcome-driven projects, it’s actually a huge temptation to skip this step and jump straight to hypotheses. Don’t do it – you’ve got to talk to customers.

Only once you’re clear on the customer problems can you go into experimentation mode – generating hypotheses and doing fast experiments to see if they impact customer behavior.
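One common way to read a fast experiment like this is a two-proportion z-test on the proxy metric: did a larger share of customers in the variant group show the behavior than in the control group? The sketch below uses only Python’s standard library. The function name and the counts are hypothetical, and this is one standard statistical technique rather than a description of how our teams run experiments.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Return (z, two-sided p-value) for the difference between two proportions."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled proportion under the null hypothesis that both groups behave the same.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variation in outcomes; the test is undefined.")
    z = (p_b - p_a) / se
    # Standard normal CDF expressed via the error function.
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    return z, 2 * (1 - cdf)


# Hypothetical counts: customers who logged in twice in their first week.
z, p_value = two_proportion_z_test(success_a=200, n_a=1000,   # control
                                   success_b=260, n_b=1000)   # variant
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the change moved the behavior, but it says nothing about whether the behavior moves the business metric. That link still has to be established separately.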

So the key takeaway here is that to run genuinely outcome-driven projects, you need to do the deceptively hard work of finding valid proxy metrics that your team can actually change. Only then can you work your way up the framework.

Acknowledge your project’s center of gravity

A way to think about this is a project’s center of gravity. For feature-driven projects, it’s at the top of the stack; for outcome-driven projects, it’s at the bottom.

For outcome-driven projects, you will be clear about the results that you want, but it always requires an uncertain leap of faith that you’ve identified the right problem to solve in order to drive that result. For feature-driven projects the issue is reversed: you can be confident that you’ve identified a real problem, but it’s harder to determine whether this problem is significant enough to drive the behaviors that impact business results.

Let’s take another simple example to illustrate this point. A separate Inbox release this summer was a set of improvements to search. This was an archetypal feature-driven project: the frequency of customers both using search and reporting frustrations made us confident that this was a problem worth solving, and we were confident we knew what improvements to make. So we did.

We could have put in a lot more analytics effort to try to correlate successful searches with business results, but there are a lot of daisy chain steps along that path. Instead we followed our usual path, beta tested it, iterated based on quantitative and qualitative results, and shipped it. We’re confident we’ll see dramatically fewer requests to fix Inbox search, but we have to acknowledge that we have no idea what business impact this project will have.

And for a project like this, that’s okay.

There’s another route that might have pointed us to improve Inbox search. Suppose we found that Inbox efficiency reliably mapped to customer retention. And through research we might have found that search was one of the hurdles for some customers. We would have tried a fast experiment to see if search improvements actually made teammates measurably more efficient. We might have subjectively thought search was better, but if it didn’t speed up teammates’ work then it wasn’t worth pursuing, so we’d opt for some other focus area. That’s the outcome-based approach.

Feature-driven vs outcome-driven projects

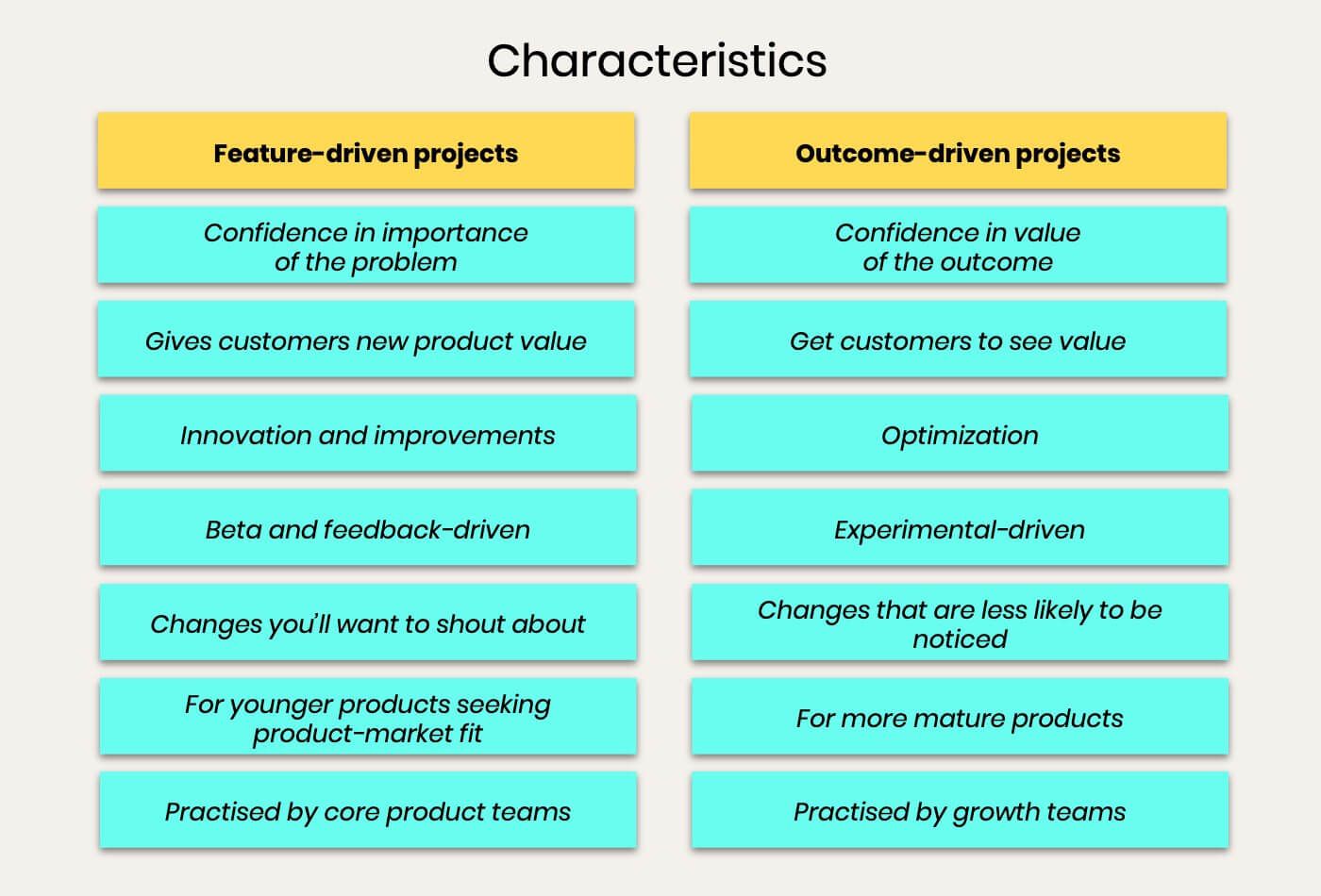

So where does all this leave us? There are some useful rules of thumb when it comes to thinking about the two different approaches, some broad characteristics that mark out the distinctions between them.

Bear in mind these are just generalizations, and you can find plenty of exceptions to each of these. But it’s also important to realize these two approaches can and should co-exist.

When we started to try to evolve R&D to become truly outcome-focused, we thought we had to change the way we worked for every project – that every project had to become outcome driven.

But what we’ve learned is that it’s far harder to do this than we anticipated. Equally, we’ve learned that our feature-driven approach is still valid, particularly when anchored on customer problems.

Ultimately, we are trying to get a better balance of projects, so we’re spending a lot of time and energy figuring out the right proxy metrics, which is crucial for really understanding the relationship between the different steps in the framework.

Both ways of working are important. Both ways fit inside the same framework. But don’t think you can casually jump from one to the other. Respect the center of gravity of each project type, and be sure to adapt how you approach each type of project to its inherent risks.