We recently released our next-generation support chatbot, Resolution Bot. It’s designed to provide instant answers to customers right in the Intercom Messenger.

It was the culmination of a huge amount of work by multiple product teams, vast amounts of research by our machine learning experts, and hands-on collaboration with the support team. We chronicled the initial training process of Resolution Bot to give you an inside look into how support teams can play a pivotal role in product development.

Kicking off the training process

Our own team here at Intercom, the Customer Support team, was also deeply involved in developing Resolution Bot. We have long been a valuable source of customer feedback for the Product team. We also take part in the QA process and beta test new feature rollouts, providing direct feedback and guidance.

This project, however, marked an entirely new level of collaboration between our teams. Resolution Bot sets out to improve and optimize the support experience, so feedback from the teams who would use it most was essential to its design. Its development benefited greatly from our input: we aim to be product experts, and helping customers day in, day out means we know their top questions.

From the very early stages of the project, we worked closely with the Product team to provide feedback on the user experience, and to really help shape this new product as it developed.

Central to our involvement was a significant challenge – providing 100 answers to our most commonly asked questions, in order to help train Resolution Bot and make sure it was ready to go from day one.

Finding the right answers

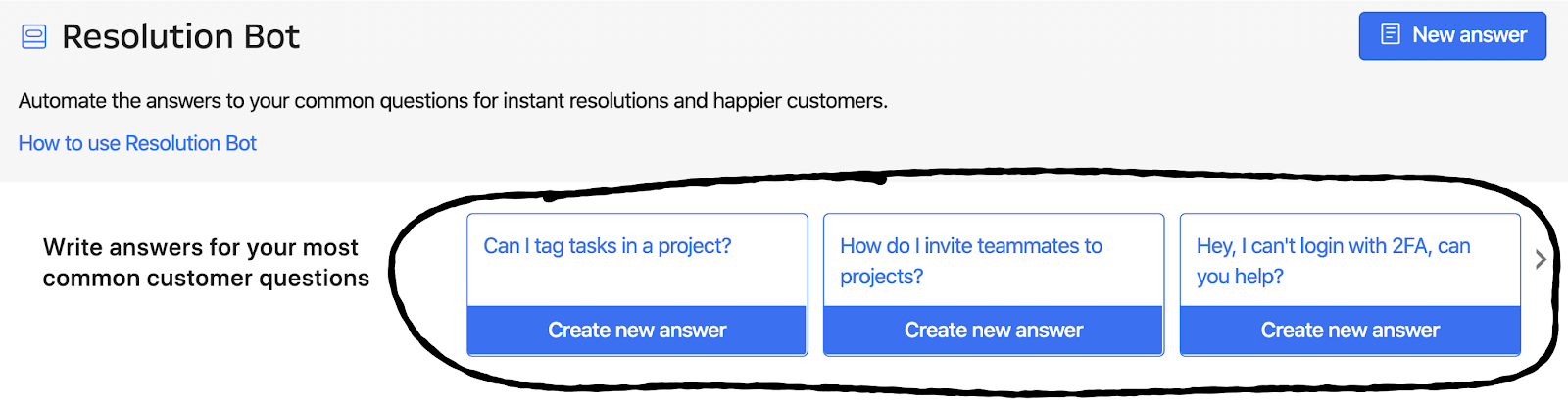

One of Resolution Bot’s most powerful features is its ability to analyze your past conversations and surface suggestions for your most commonly asked questions. But starting from scratch, we needed to collate a pool of answers to help train it and gauge its effectiveness.

Training a chatbot can feel overwhelming, especially if you’re not sure where to start. That’s why we set ourselves a concrete target of 100 answers: enough to give us a strong understanding of the ins and outs of the product.

It would also allow us to really dig into the data behind these answers. Above all, we needed to know how often the answers successfully resolved questions automatically. A sample size of 100 answers allowed us to adequately assess the impact that Resolution Bot could have for a support team like Intercom’s.

We reasoned that if Resolution Bot could resolve even 10% of our customers’ frequently asked questions, it would free up a serious amount of time. Our team could then spend less time on those frequently asked questions and more on our customers’ tougher problems: resolving the more technical investigations and helping new customers get set up as quickly as possible.

We were right, and then some. Since re-launching Resolution Bot, we’ve learned that the chatbot instantly solves 33% of common support questions and, on average, has helped businesses save their customers 109 hours over the last year.

Building a thoughtful testing process

Our first approach to testing Resolution Bot was to build a dedicated group of Customer Support teammates who would create answers within Resolution Bot on the main Intercom workspace, test the product to see what worked and, more importantly, what didn’t, and provide detailed feedback to the Product team.

For this dedicated team, we enlisted some of our most tenured teammates, spread across four offices around the globe, all of whom had deep knowledge of Intercom and our products.

We worked together to identify and build out our answers, supplying Resolution Bot with suggested answers while it was being tested. Communicating regularly, we reviewed the suggested answers to ensure they were firing properly and sending out the correct information.

We were, in a sense, helping to train Resolution Bot as it became smarter and more useful.

Questioning our intuition

As we collated our 100 answers, we discovered something interesting. At first, we relied on our own intuitive sense of what Intercom customers’ most frequently asked questions were. We were the experts in this field, so it seemed like a natural way to start.

However, it turned out that these questions diverged markedly from what the data revealed. The anecdotal approach produced very different answers from the analytical one. For instance, teammates who had worked on particular projects naturally tended to suggest questions about those topics.

It also revealed some understandable blind spots. One frequently asked question we missed was “Where do I find my closed conversations?” This is second nature to the support team, and it didn’t occur to us that it might confuse a customer.

When our analytics team provided us with conversation “clusters” (conversations that coalesced around similar questions, themes or topics), we were able to identify the most glaring omissions. We used the clusters as a platform to clearly outline questions for which answers were needed.

Using hard data as a springboard for crafting answers supercharged the product and helped us hit our goal of 100 live answers within two weeks.

The next step was to start monitoring the answers we created, optimize them for quality, and fix the ones that simply weren’t working.

We took a close look at answers that were misfiring and created new answers to fill the gaps. For example, an answer about how to create messages might have fired for a question about creating users, in which case we simply created a correct answer for that question.

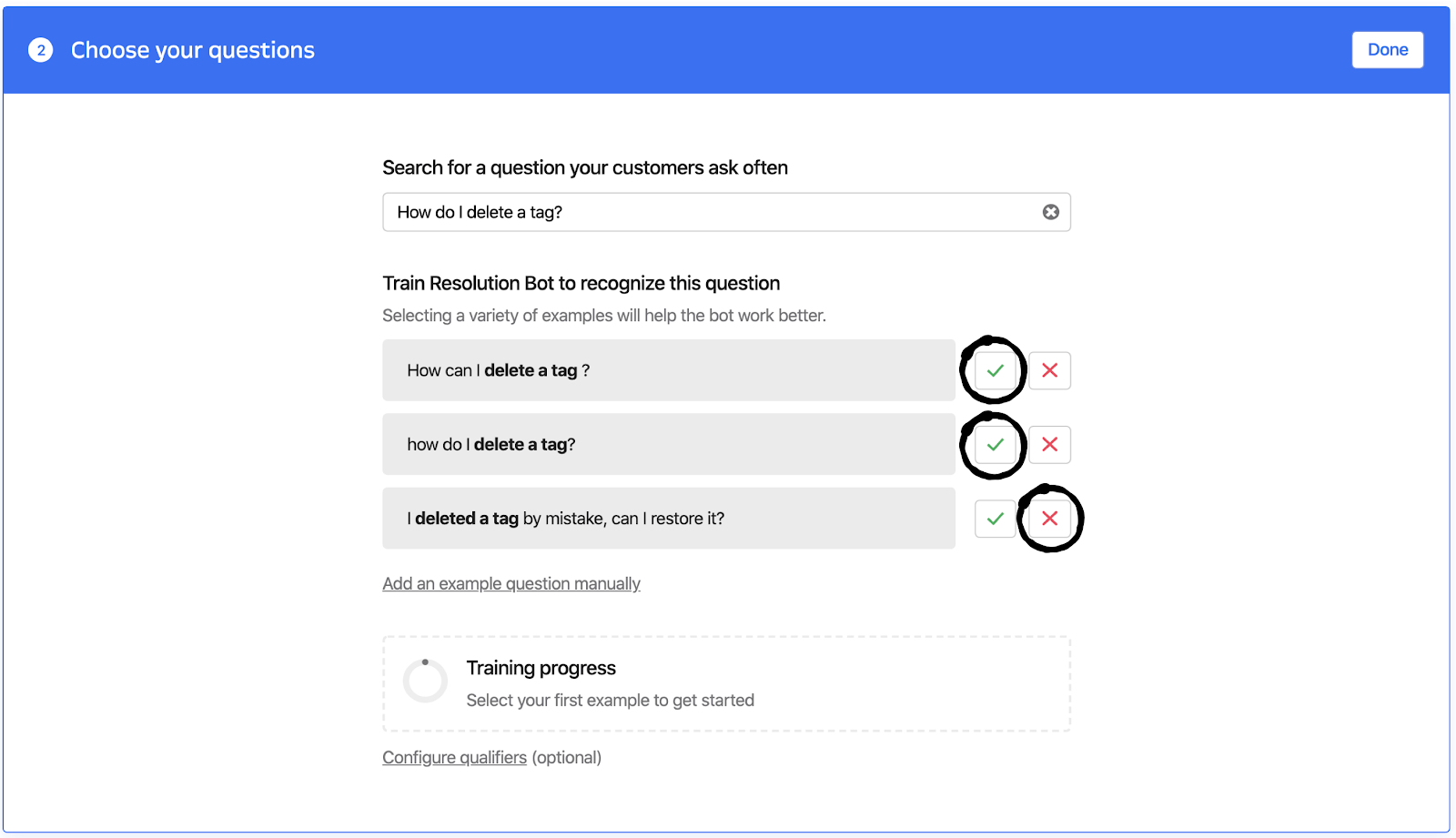

To diagnose why some answers misfired, we focused on the example questions supplied for each answer. Was there enough meaningful variation between the examples? “Meaningful variation” means having different ways of phrasing the important words within a question. The difference between “Can I?” and “How do I?” is not a meaningful variation. By contrast, the difference between “admin” and “teammate” is a very meaningful variation indeed.

This is how we trained Resolution Bot to recognize questions on a given issue. We had to ensure that the examples we fed to Resolution Bot included multiple phrasings of the same issue, while excluding questions that shared words but differed in meaning.

A final tool available to us was qualifiers, which allow us to clearly define the phrases that must be present in a customer’s question for an answer to fire.
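To make the idea of qualifiers concrete, here is a toy sketch in Python. It is not how Resolution Bot works internally: the `Answer` class, the word-overlap (Jaccard) scoring, the 0.3 threshold, and the two example answers are all hypothetical assumptions chosen for illustration. It shows how a qualifier gate can stop an answer from firing for a question that merely shares words with its examples, the kind of misfire described above.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Answer:
    """Hypothetical, simplified model of a bot answer (not Intercom's API)."""
    title: str
    examples: List[str]  # example customer questions that should trigger this answer
    qualifiers: List[str] = field(default_factory=list)  # phrases that must be present


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: set, b: set) -> float:
    """Word-overlap similarity |A & B| / |A | B| (0.0 if both sets are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def passes_qualifiers(question: str, answer: Answer) -> bool:
    """True only if every qualifier phrase appears as a whole word or phrase."""
    q = question.lower()
    return all(
        re.search(rf"\b{re.escape(phrase.lower())}\b", q) for phrase in answer.qualifiers
    )


def best_answer(question: str, answers: List[Answer], threshold: float = 0.3,
                use_qualifiers: bool = True) -> Optional[Answer]:
    """Return the best-scoring answer above the threshold, or None."""
    q_tokens = tokenize(question)
    best, best_score = None, 0.0
    for answer in answers:
        # Qualifier gate (hypothetical): skip answers whose required phrases are missing.
        if use_qualifiers and not passes_qualifiers(question, answer):
            continue
        # Score against the closest example, so varied phrasings widen coverage.
        score = max(jaccard(q_tokens, tokenize(ex)) for ex in answer.examples)
        if score > best_score:
            best, best_score = answer, score
    return best if best_score >= threshold else None


# Hypothetical answers for illustration only.
ANSWERS = [
    Answer("Create a message",
           examples=["how do I send a message to my users", "create a new message"],
           qualifiers=["message"]),
    Answer("Import users",
           examples=["how do I create users", "import my users from a csv"],
           qualifiers=["users"]),
]

if __name__ == "__main__":
    print(best_answer("How do I create a message?", ANSWERS).title)  # Create a message
    print(best_answer("How do I create a message?", ANSWERS,
                      use_qualifiers=False).title)  # Import users
    print(best_answer("What's your pricing?", ANSWERS))  # None
```

Without the qualifier gate, “How do I create a message?” lands on the users answer, because it shares more words with “how do I create users” than with either message example. Requiring “users” to appear before that answer can fire removes the misfire without touching the examples.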

Cross-functional development

Our work didn’t stop at the Customer Support team. We also worked with our Product Education and Sales teams, helping them identify and convey the value of Resolution Bot to leads and customers.

Because the Customer Support team spent time testing Resolution Bot during its early evolution, we were set up for success in time for the launch, and the benefit was twofold. We were able to hit the ground running in supporting our customers as they adopted Resolution Bot, and we got great value from Resolution Bot ourselves.

Keeping Resolution Bot healthy

We have now established a dedicated Resolution Bot team to curate, maintain, and optimize our answers. The re-launch of Resolution Bot makes this process even more effective, because Resolution Bot lets us track which answers are underperforming so that we can improve them.

We can also now create a strong answer from the get-go by copying one from a past conversation that we know resolved the customer’s question immediately. Using Resolution Bot ourselves means our support team now has more time to focus on the meatier questions and to help our customers in even more valuable ways.

Our collaboration with the Product team played a major part in Resolution Bot’s evolution. If you work on a support team, your feedback plays an essential role in the success and development of new products. If you aren’t already involved, reach out to your product team and see how you can help.

That collection of 100 answers we started out with two years ago has evolved and grown. With over 55k conversations resolved immediately and over 300 live answers, Resolution Bot, it’s fair to say, is coming into its own as a crucial piece in the next generation of support.

Extra resources:

- How Resolution Bot works

- How to create an effective answer for Resolution Bot

- Train Resolution Bot to provide more resolutions

- Measure Resolution Bot’s performance